Mastering Character Consistency in GenAI

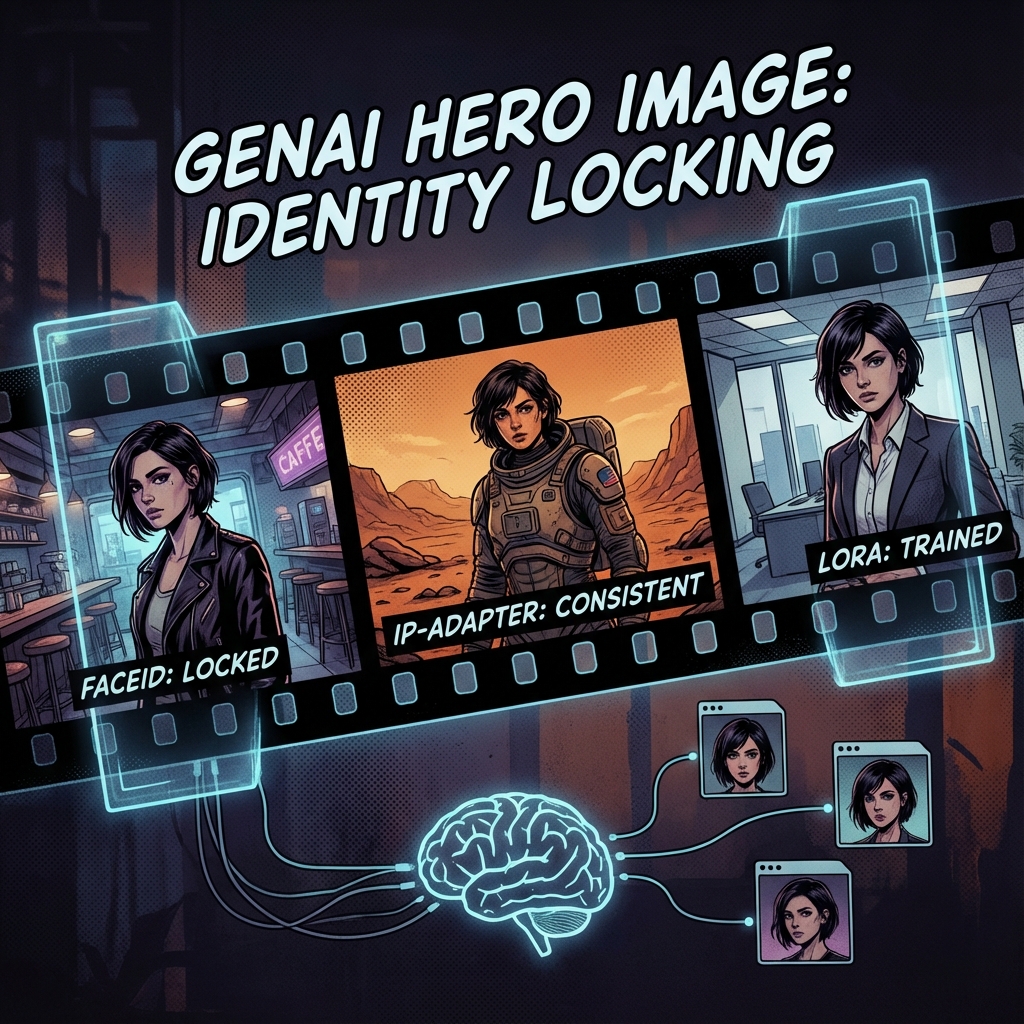

The holy grail of AI storytelling: keeping a character's face and clothing identical across different scenes, angles, and lighting conditions.

Project Overview

The biggest hurdle for AI comics and movies is that generative AI models behave like a chaotic dream: every generation yields a slightly different person. To solve this, we employ a 'Consistency Stack' that combines IP-Adapter (for general features), FaceID (for identity), and LoRA (for specific clothing). This keeps our protagonist 'Alex' looking like 'Alex' whether he's at a cafe or on Mars.

System Architecture

Consistency isn't achieved by one tool, but by layering constraints. We start with a high-quality 'Reference Sheet' of the character. During generation, we use 'IP-Adapter FaceID Plus' to inject the facial embeddings directly into the model's cross-attention layers, bypassing the text prompt's ambiguity. We essentially 'force' the model to draw the reference face.
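To make the injection step concrete, here is a minimal pure-Python sketch of the decoupled cross-attention idea behind IP-Adapter: the usual attention over text tokens, plus a second, separately weighted attention over image (face) tokens. It is a toy illustration, not the adapter's actual implementation. The function names, the 2-d vectors, and the omission of the learned key/value projections are all assumptions made for brevity.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Single-query scaled dot-product attention over key/value vectors."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]

def decoupled_cross_attention(query, text_kv, image_kv, ip_scale):
    """Text attention plus a separately weighted attention over image tokens."""
    text_out = attend(query, *text_kv)
    image_out = attend(query, *image_kv)
    return [t + ip_scale * i for t, i in zip(text_out, image_out)]

# Toy example (hypothetical values): one latent query, two text tokens, one face token.
query = [1.0, 0.0]
text_kv = ([[1.0, 0.0], [0.0, 1.0]], [[0.2, 0.2], [0.8, 0.8]])
face_kv = ([[1.0, 1.0]], [[1.0, -1.0]])

text_only = decoupled_cross_attention(query, text_kv, face_kv, ip_scale=0.0)
with_face = decoupled_cross_attention(query, text_kv, face_kv, ip_scale=0.7)
```

With `ip_scale=0.0` the result is plain text-conditioned attention. Raising the scale adds more of the face branch's output, which is the role the adapter scale plays in the pipeline.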

Reference Sheet

Grid image showing front, side, and 3/4 views.

IP-Adapter FaceID

Model that transfers facial features from image to image.

OpenPose

ControlNet model to dictate the character's body position.

Inpainting

Fixing small details (eyes, hands) in post-production.
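One way to prepare the reference sheet for the components above is to slice the grid into individual views before extracting face embeddings from each. The sketch below only computes the crop boxes. The sheet size, the single-row layout, and the helper name `panel_boxes` are assumptions for illustration.

```python
def panel_boxes(sheet_width, sheet_height, columns, rows=1):
    """Return (left, upper, right, lower) crop boxes for an evenly spaced grid."""
    panel_w = sheet_width // columns
    panel_h = sheet_height // rows
    return [
        (col * panel_w, row * panel_h, (col + 1) * panel_w, (row + 1) * panel_h)
        for row in range(rows)
        for col in range(columns)
    ]

# Hypothetical 3072x1024 sheet with front, side, and 3/4 views in one row.
views = dict(zip(["front", "side", "three_quarter"],
                 panel_boxes(3072, 1024, columns=3)))
```

Each box uses the (left, upper, right, lower) convention, so it can be passed directly to an image library's crop call such as Pillow's `Image.crop`.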

Implementation Details

Code Example

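    # Pseudo-code for ComfyUI / Diffusers
    # Assumes `pipe` is an SDXL ControlNet pipeline, `face_embeds` holds
    # precomputed face-ID embeddings, and `pose_map` is an OpenPose image.
    pipe.load_ip_adapter(
        "h94/IP-Adapter-FaceID",
        subfolder=None,
        weight_name="ip-adapter-faceid_sdxl.bin",
        image_encoder_folder=None,
    )
    pipe.set_ip_adapter_scale(0.7)  # how strongly the reference face steers the output

    image = pipe(
        prompt="Alex wearing a space suit on Mars",
        ip_adapter_image_embeds=[face_embeds],  # FaceID weights take embeddings, not a raw image
        image=pose_map,  # ControlNet conditioning (OpenPose skeleton)
    ).images[0]

Agent Memory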

Even with image adapters, give your character a unique identifier in the prompt (e.g., 'ohwx man'). This helps the model's cross-attention mechanism correlate the visual identity with a specific text token.
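A small helper can keep the identifier consistent across every scene prompt, so the same token always leads the text. This is a minimal sketch. The function name `build_prompt`, the comma-separated layout, and the example scenes are assumptions, not part of any library.

```python
def build_prompt(trigger, scene, outfit=None):
    """Compose a prompt that always starts with the character's trigger token."""
    parts = [trigger]
    if outfit:
        parts.append(f"wearing {outfit}")
    parts.append(scene)
    return ", ".join(parts)

TRIGGER = "ohwx man"  # the unique identifier from the tip above

prompts = [
    build_prompt(TRIGGER, scene, outfit="a space suit")
    for scene in ["at a cafe", "on Mars"]
]
```

Generating every prompt through one function avoids typos or word-order drift in the identifier, which would otherwise weaken the link between the token and the character.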

Workflow

Process initiated

Analysis performed

Results delivered

Results & Impact

"Before this stack, we had to photoshop every frame. Now, the AI gets the face right 9 times out of 10."

Brand Identity

Mascots remain recognizable across campaigns.

Speed

No need to fine-tune a LoRA for every minor character.

Quality

Retains skin texture and micro-details of the reference.

About the Author

Parmeet Singh Talwar

AI Context Engineer

Apex Neural

Parmeet engineers context-driven AI that combines LLMs with structured backend architecture and multi-platform integrations. He builds AI-powered systems with secure OAuth, fine-tunes open-source LLMs, and integrates image and video generation into production pipelines. Focused on clean design and system reliability.
