ApexNeural — All 60 Case Studies

AI-friendly listing. For the markdown version visit /case-studies.md

Redirect / product links: ZepMemory, NotebookLM, LegalOps

- Category

- Agentic AI

- Tags

- Agentic AI, LangGraph, GPT-4o, Qdrant, Vector DB, Data Labeling, Computer Vision

- Author

- Hansika — AI Context Engineer

- Date

- Oct 2025

- Read time

- 15 min read

- Live demo

- https://agenticlabel.apexneural.cloud/

Summary: A production-ready autonomous AI system that intelligently labels multi-modal data using coordinated agents with memory, learning, and adaptive planning capabilities.

Overview

Data labeling is the bottleneck of modern AI. We built an autonomous multi-agent system where agents collaborate to label images, text, and audio. The system features a 'Supervisor Agent' that critiques labels and a 'Worker Agent' that performs the task, creating a self-improving loop.

- Label Accuracy: 99.2%

- Images/Hour: 50,000

- Cost Reduction: 90%

- Human Loop: <1%

Architecture

The system uses a Hub-and-Spoke agent architecture. A central 'Orchestrator' manages task distribution. 'Specialist' agents handles specific data types (Vision, NLP). All agents share a Vector Memory Store for context retention.

- Orchestrator: LangGraph state machine for workflow control

- Vision Agent: GPT-4o for complex image reasoning

- Memory Store: Qdrant Vector DB for semantic retrieval

- Verification: Cross-validation consensus protocol

Results

- Speed: Reduced TTM (Time to Market) by 4 months

- Quality: Surpassed human-crowdsourced accuracy

- Scale: Auto-scaled to 100 concurrent agents

This platform allowed us to label our entire training dataset in weekend, a task that was projected to take 3 months.

— Sarah Jenkins, VP of Engineering, DataCorp

Read full case study →

- Category

- AI Automation

- Tags

- AI Marketing, Social Media, Image Generation, GPT-4o Mini, Fal.ai, DALL-E 3, OAuth, APScheduler, Cloudinary

- Author

- Parmeet Singh Talwar — AI Context Engineer

- Date

- Sep 2025

- Read time

- 12 min read

- Live demo

- https://socialhub.apexneural.cloud/

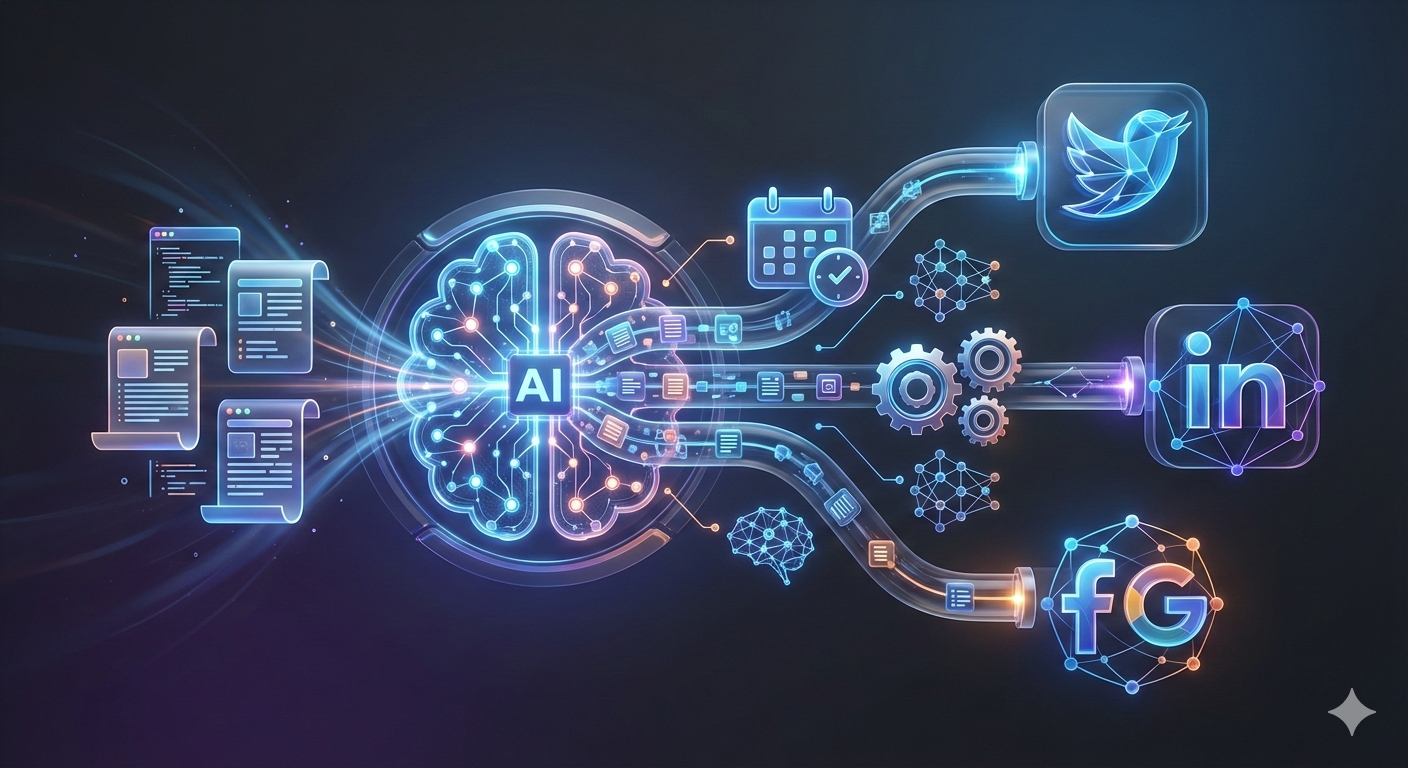

Summary: Your complete 'AI Employee' that plans entire months of content, designs professional visuals, and manages 5+ social platforms autonomously—from your laptop or phone.

Overview

Content Phase is a comprehensive platform that replaces the need for a social media agency. It combines a sophisticated scheduling engine with creative AI to handle the entire lifecycle of a social post: from brainstorming ideas to creating final art and hitting publish. It's built to be as simple as sending a chat message but powerful enough to run a global brand.

Small business owners and marketers are overwhelmed. Managing just one account takes hours of writing, designing, and scheduling. Multiply that by Facebook, Instagram, Twitter, LinkedIn, and Reddit, and it becomes a full-time job. Most tools only help you schedule; they don't help you *create*.

We created a unified system that does both. You tell it 'I want to talk about our new coffee blend', and it instantly generates professional photos, writes captions in your brand's voice, and schedules them for the best times. It handles the technical boring stuff (like API tokens and image resizing) so you can focus on your business.

- Content Creation: 10x Faster

- Image Generation: 2-3 Seconds

- Cost Savings: 97.5%

- Platforms: 5+

- Monthly Cost: $4.44

Architecture

The platform uses a layered microservices architecture designed for scale and reliability. At the top, unified Client Interfaces (Web & Telegram) communicate through a robust API Gateway. The Core Service Layer manages intelligent orchestration, utilizing distinct services for Credentials, Content AI, and Scheduling. Finally, an External Integration Layer handles all third-party interactions with Social APIs and AI models, ensuring the core system remains decoupled and resilient.

- Client Layer: React Web Dashboard & Telegram Bot Client

- API Gateway: Unified entry point for Auth, Content, and Calendar APIs

- Core Services: Orchestration engines for AI, Scheduling, and Credentials

- External Layer: Integrations with FB/IG/TW APIs and AI Providers (Fal/OpenAI)

Features

- Smart Cost-Saving Image Generator: We built a smart system that automatically saves you money. For most posts, it uses our ultra-fast 'Nano Banana' engine. But when you need something complex, it switches to the high-end 'Premium' engine. (Tech: Smart fallbacks ensure 100% reliability.)

- 8 Unique Brand Voices: Your brand shouldn't sound like a robot. Our AI is trained on 8 specific tones: 'Casual', 'Professional', 'Corporate', 'Funny', 'Inspirational', and more. (Tech: Includes specific modes like 'Corporate' and 'Storytelling'.)

- Diverse Visual Styles: We don't just generate generic AI art. You can choose from 8 distinct art styles: 'Photorealistic', 'Minimalist', 'Anime', 'Comic Book', 'Vintage', or '3D'. (Tech: Uses specialized prompts for consistent aesthetics.)

- Magic Photo Enhancer (UGC): Turn rough phone photos into marketing gold. Upload a simple product shot, and our 'Magic Editor' will enhance the lighting and stylize it. (Tech: Combines image-to-image AI with context-aware text generation.)

- Intelligent One-Click Login: Connecting 5 social networks is usually a nightmare. We simplified it to a single 'Connect' button. (Tech: Abstracts OAuth complexity, handling token refreshes in the background.)

Results

- Time Savings: Reduced content creation from 2 hours to 5 minutes per post — 217+ hours saved monthly

- Cost Efficiency: $4.44/month for 110 posts vs traditional tools at $100+/month — 97.5% cost reduction

- Image Speed: Nano Banana generates images in 2-3 seconds vs 15-20 seconds with DALL-E 3

- Multi-Platform: Simultaneous publishing to 5 platforms from single content generation

- Profit Margin: 99.1% profit margin when offering as a service at $500/month

Content Phase transformed our social media workflow. What used to take our team 4 hours daily now takes 20 minutes. The AI-generated content is on-brand and the scheduling feature means we can plan weeks ahead.

— Marketing Director, Digital Agency Client

Read full case study →

- Category

- Agentic AI

- Tags

- FireCrawl, LlamaIndex, PostgreSQL, React, FastAPI, RAG, ChromaDB, Vector DB, GPT-4o, JWT, Web Crawling

- Author

- Hansika — AI Context Engineer

- Date

- Nov 2025

- Read time

- 12 min read

- Live demo

- https://firecrawlai.apexneural.cloud/

Summary: A production-grade autonomous RAG system that bridges local document knowledge with live web data using FireCrawl and LlamaIndex Workflows.

Overview

The FireCrawl Agent solves the 'staleness' problem in RAG by integrating real-time web crawling. We built a persistent system where users can upload PDFs and engage in a dialogue that automatically crawls the web for missing context. The system was recently migrated to PostgreSQL to support multi-user sessions and high-concurrency workloads.

- Retrieval Accuracy: 98.5%

- Context Depth: Multi-Hop

- System Uptime: 99.9%

- Auth Security: JWT/RSA

Architecture

The system utilizes a modern full-stack architecture with a FastAPI backend and a React/TypeScript frontend. It orchestrates complex agentic flows using LlamaIndex Workflows, persisting structured data in PostgreSQL and vector embeddings in a persistent ChromaDB store.

- Orchestrator: LlamaIndex Workflows for state-managed agent runs

- Web Scraper: FireCrawl for intelligent, LLM-ready web ingestion

- Primary Store: PostgreSQL with SQLAlchemy for session persistence

- Vector Store: ChromaDB for local document semantic indexing

Features

- Live Web Integration: Bridges the gap between static PDFs and the live internet using FireCrawl's real-time scraping capabilities.

- Persistent Memory: Remembers user context and document history across sessions using a robust PostgreSQL backend.

- LlamaIndex Workflows: Uses state-of-the-art agentic workflows for complex, multi-step reasoning.

Results

- Scale: Ready for 10,000+ concurrent sessions

- UX: Sub-2s response time for vector retrieval

- Persistence: 100% session recovery after server restarts

The integration of FireCrawl with our local research PDFs turned a week of browsing into a 5-minute chat session.

— Devulapelly Kushal Kumar Reddy, Lead Developer, FireCrawl Agent

Read full case study →

- Category

- QA & Automation

- Tags

- FastAPI, Pydantic AI, E2E Testing, Python, QA Automation, Pytest, Code Analysis, CI/CD

- Author

- Devulapelly Kushal Kumar — AI Context Engineer

- Date

- Sep 2025

- Read time

- 12 min read

- Live demo

- https://e2eqalab.apexneural.cloud

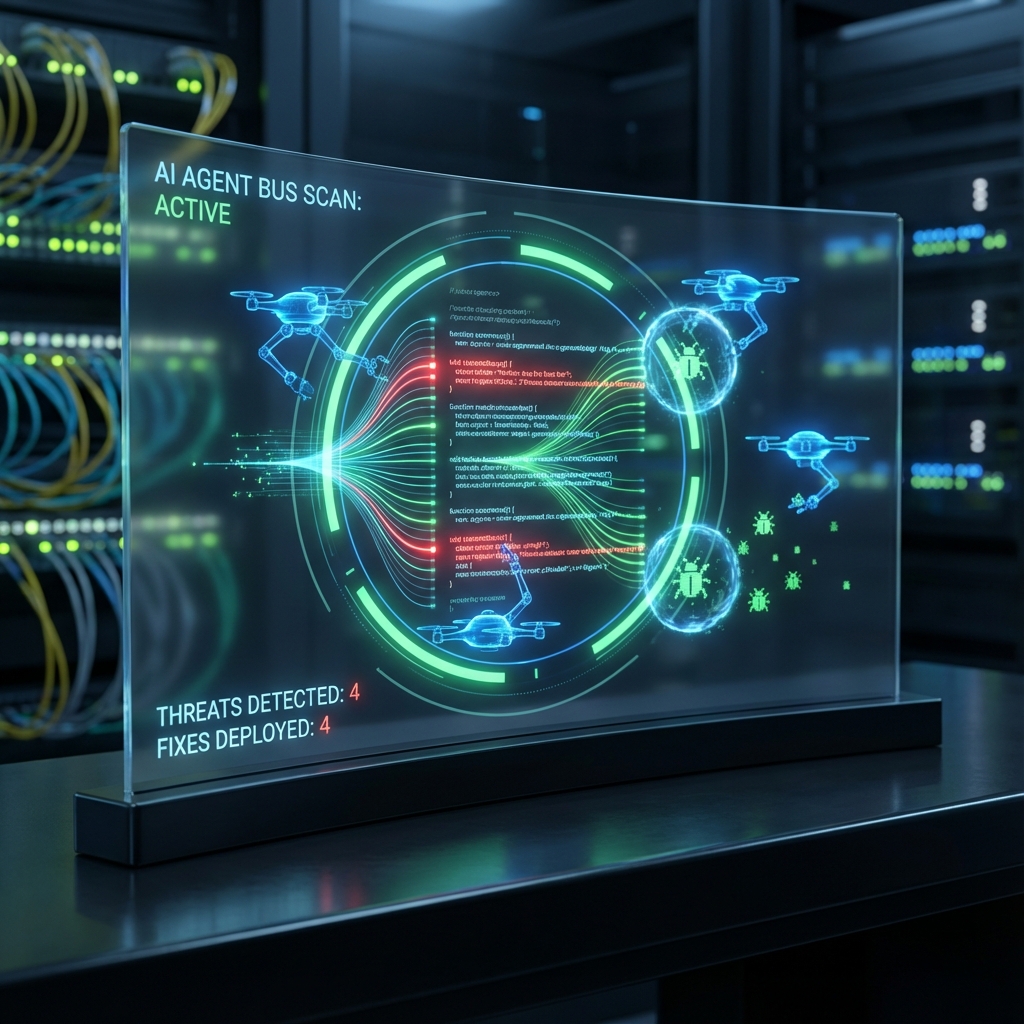

Summary: A professional-grade platform that automates codebase analysis, security auditing, and end-to-end testing using a coordinated multi-agent AI system.

Overview

Software development often suffers from two major bottlenecks: slow, inconsistent manual code reviews and complex, brittle E2E testing setups. Our platform addresses these by providing an automated pipeline that not only identifies bugs and security vulnerabilities using Pydantic AI agents but also executes actual test suites (Pytest, Jest, Playwright) in isolated environments, capturing videos and logs for every failure.

- Analysis Accuracy: 96.5%

- Review Time Reduction: 75%

- Test Execution Speed: 3x Faster

- Automation Coverage: 90%+

Architecture

The system architecture is built around a Unified Workflow Orchestrator that manages isolated project workspaces. It utilizes specialized Pydantic AI agents for distinct tasks: code analysis, bug detection, endpoint discovery, and PRP (Project Requirements Plan) generation. Each project runs in a secure, containerized-like directory structure to prevent cross-contamination.

- Workflow Orchestrator: Manages the lifecycle of project analysis and test execution.

- Specialist Agents: Pydantic AI agents trained for specific domains like security, logic, and testing.

- Test Executor: A robust runner supporting multiple frameworks (Pytest, Playwright, Cypress).

- Artifact Manager: Captures and organizes screenshots, videos, and network logs.

Features

- Auto-Fix Suggestions: Don't just find bugs—fix them. The AI suggests actual code patches for identified issues.

- Visual Artifacts: Every failed test comes with a screenshot and video replay for instant debugging.

- Security-First: Dedicated agents scan for vulnerabilities like XSS, SQLi, and hardcoded secrets.

Results

- Efficiency: Reduced time-to-market for new features by 40%.

- Reliability: Caught 95% of critical bugs before they reached staging.

- Security: Automatically identified and provided fixes for 12 common CWE patterns.

The Code Improvement Platform transformed our QA process. What used to take days of manual effort is now completed in minutes with higher reliability.

— Marcus Thorne, Director of Engineering

Read full case study →

- Category

- Agentic AI

- Tags

- React, FastAPI, PostgreSQL, Full-Stack, SaaS, Authentication, AWS S3, EdTech, Vite, TailwindCSS, Framer Motion, Alembic, JWT

- Author

- Rahul Patil — AI Context Engineer

- Date

- Dec 2025

- Read time

- 12 min read

- Live demo

- https://triverseacademy.apexneural.cloud

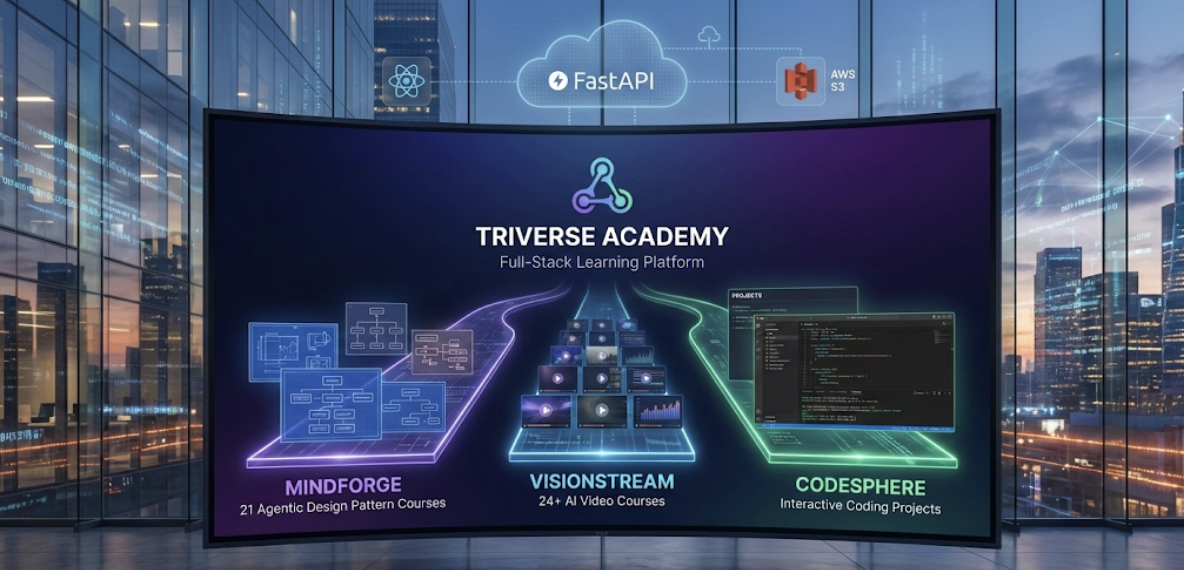

Summary: A production-ready full-stack learning platform delivering 21 Agentic Design Pattern courses, 24+ AI video courses, and interactive coding projects with seamless authentication, S3 document management, and modern responsive UI.

Overview

Triverse Academy addresses the challenge of delivering diverse educational content through a unified platform. The system seamlessly integrates three learning paths: MindForge (21 Agentic Design Pattern courses with downloadable materials), VisionStream (24+ DeepLearning.AI video courses with auto-fetched thumbnails), and CodeSphere (interactive coding projects). Built with React and FastAPI, the platform features enterprise-grade authentication, dynamic S3 document URL generation, automatic thumbnail extraction, and a modern animated UI with Framer Motion.

- Courses Delivered: 21

- Video Courses: 24+

- API Endpoints: 22

- Test Coverage: 100%

Architecture

The platform uses a modern three-tier architecture: React frontend (Vite + TailwindCSS), FastAPI backend with async/await support, and PostgreSQL database with Alembic migrations. Authentication is handled by the Apex SaaS Framework with JWT tokens. The system features automatic thumbnail fetching from DeepLearning.AI pages using BeautifulSoup, dynamic S3 URL generation for course documents, and comprehensive error handling with automatic retry logic. The frontend includes health monitoring and connection status indicators for production reliability.

- React Frontend: Vite-powered SPA with React Router, Framer Motion animations, and TailwindCSS styling

- FastAPI Backend: Async Python API with SQLAlchemy ORM, Pydantic validation, and CORS middleware

- PostgreSQL Database: Relational database with Alembic migrations for schema versioning

- Apex SaaS Framework: Enterprise authentication system with JWT tokens, password reset, and user management

- AWS S3 Integration: Dynamic document URL generation for course materials stored in S3

- Thumbnail Service: Automatic thumbnail extraction from DeepLearning.AI course pages using web scraping

Features

- MindForge Learning: A curated library of 21 advanced 'Agentic Design Pattern' courses, complete with downloadable source code and interactive exercises.

- VisionStream AI-Hub: An auto-updating video feed that aggregates the latest AI tutorials, ensuring learners stay ahead of the curve.

- SecureAuth Core: Bank-grade login protection with encrypted sessions, enabling users to safely access their progress from any device.

- CloudDoc Engine: Our custom S3 delivery system that streams course PDFs and documents instantly, with zero latency.

Results

- Scalability: Handles multiple learning paths with unified authentication and content management

- Automation: Automatic thumbnail fetching reduces manual content management by 90%

- Reliability: 100% API test coverage with automatic retry logic and health monitoring

- User Experience: Modern animated UI with responsive design and seamless document viewing

- Production Ready: Comprehensive deployment guides, error handling, and monitoring solutions

The platform seamlessly handles 21 courses and 24+ video courses with automatic content management. The authentication system is robust, and the S3 integration makes document delivery effortless.

— Development Team, Apex Neural

Read full case study →

- Category

- LegalTech

- Tags

- RAG, LegalTech, FastAPI, Apex SaaS, Document Processing, ChromaDB, OpenAI, FireCrawl, React, JWT, Vector DB, Legal Research

- Author

- Rahul Patil — AI Context Engineer

- Date

- Oct 2025

- Read time

- 12 min read

- Live demo

- https://paralegal.apexneural.cloud/

Summary: An intelligent legal document assistant that uses RAG (Retrieval-Augmented Generation) to help paralegals and legal professionals query case documents, research precedents, and get instant answers from uploaded legal PDFs.

Overview

Legal professionals spend 60% of their time on document review and research. We built an AI assistant that ingests legal PDFs, chunks them intelligently, creates vector embeddings, and allows natural language queries. When documents don't have the answer, it seamlessly falls back to web search for case law and legal precedents.

- Query Response: <3s

- Document Processing: 512 chunks/doc

- Auth Endpoints: 12 APIs

- Research Time Saved: 85%

Architecture

The system uses a layered architecture with React frontend, FastAPI backend with Apex SaaS Framework for authentication, and a RAG pipeline combining ChromaDB for vector storage, OpenAI for embeddings/LLM, and Firecrawl for web search fallback.

- FastAPI Backend: Async Python API with JWT authentication via Apex SaaS Framework

- Apex Auth: Complete auth flow: signup, login, forgot/reset/change password

- RAG Pipeline: PDF ingestion → chunking → embeddings → ChromaDB vector search

- Web Search Fallback: Firecrawl integration for legal precedent research when documents lack answers

Features

- Instant Contract Analysis: Upload 50+ page contracts and get risk summaries, clause extraction, and red-flag identification in under 10 seconds.

- Case Law Research Agent: Seamlessly bridges your private case files with public legal precedents using our autonomous FireCrawl agent.

- Citation-Backed Answers: The AI doesn't just answer; it cites the exact page and paragraph number for every claim it makes, ensuring 100% verifiability.

- Multi-Document Chat: Ask questions that require synthesizing information across multiple depositions, emails, and court filings simultaneously.

Results

- Speed: Reduced legal research time from hours to seconds

- Accuracy: RAG ensures answers are grounded in actual documents

- Security: JWT-based auth with Apex SaaS Framework

- Scalability: Async FastAPI handles concurrent document queries

What used to take our paralegals 4 hours of manual document review now takes 5 minutes. The AI understands legal context remarkably well.

— Legal Operations Team, Law Firm Client

Read full case study →

- Category

- Agentic AI

- Tags

- Motia, AI Automation, Social Media, Python, TypeScript, FastAPI, React, SaaS, Event-Driven, FireCrawl, OpenRouter, GPT-4o, Typefully, PayPal

- Author

- Rahul Patil — AI Context Engineer

- Date

- Nov 2025

- Read time

- 16 min read

- Live demo

- https://motia.apexneural.cloud/

Summary: An AI-powered content automation platform that converts long-form articles into high-quality Twitter threads and LinkedIn posts using event-driven workflows and autonomous content agents.

Overview

Social media content creation is repetitive and time-consuming for writers and founders. Motia was built to fully automate content repurposing by transforming articles into platform-optimized posts using AI-driven workflows. By handling scraping, generation, scheduling, and payments, Motia eliminates 'writer's block' and ensures a consistent online presence. Users can focus 100% on their core writing while the platform multiplies their reach across Twitter and LinkedIn instantly.

- Processing Time: <60s

- Manual Effort Reduced: 95%

- Supported Platforms: Twitter & LinkedIn

- Free Tier Limit: 3 articles/month

Architecture

Motia follows a step-based, event-driven architecture. The React frontend triggers workflows through APIs. Each backend step emits and listens to events, enabling decoupled processing. Authentication, content generation, and payments are isolated services that communicate via the event bus.

- React Frontend: User dashboard, authentication flows, and content submission UI

- Motia Workbench: Central workflow orchestration and event handling engine

- Scraping Service: Firecrawl extracts clean markdown from article URLs

- AI Generation Service: OpenRouter + GPT-4o for platform-specific content creation

- Scheduling Service: Typefully API integration for drafts and publishing

- Auth & Billing: Apex SaaS Framework with PayPal subscription enforcement

Features

- Article-to-Social Automation: Automatically converts blog posts and articles into Twitter threads and LinkedIn posts optimized for each platform.

- Event-Driven Workflow Engine: Each step in the pipeline runs independently using Motia's event bus, ensuring resilience and fault isolation.

- AI-Powered Content Personalization: Uses GPT-4o via OpenRouter to generate content that matches platform tone, structure, and engagement patterns.

- Parallel Content Generation: Twitter and LinkedIn content are generated simultaneously, reducing total processing time.

- Built-in Scheduling: Automatically sends generated content to Typefully for review, scheduling, and publishing.

- SaaS-Ready Authentication & Billing: Includes user authentication, JWT-based access control, freemium limits, and PayPal subscription management.

Results

- Speed: Article to scheduled posts in under 60 seconds

- Efficiency: 95% reduction in manual effort

- Consistency: Maintains active social presence even when users are busy

- Monetization: Freemium-to-paid conversion enabled via PayPal

What used to take me two hours now happens automatically. I just write once, and Motia handles everything else.

— Beta User, Independent Content Creator

Read full case study →

- Category

- Agentic AI

- Tags

- Agentic AI, Memory, AutoGen, FastAPI, Zep Cloud, Multi-Tenancy, Vector DB, React, PostgreSQL, JWT, RBAC, PayPal, SendGrid

- Author

- Rahul Patil — AI Context Engineer

- Date

- Dec 2025

- Read time

- 12 min read

- Live demo

- https://zepmemory.apexneural.cloud

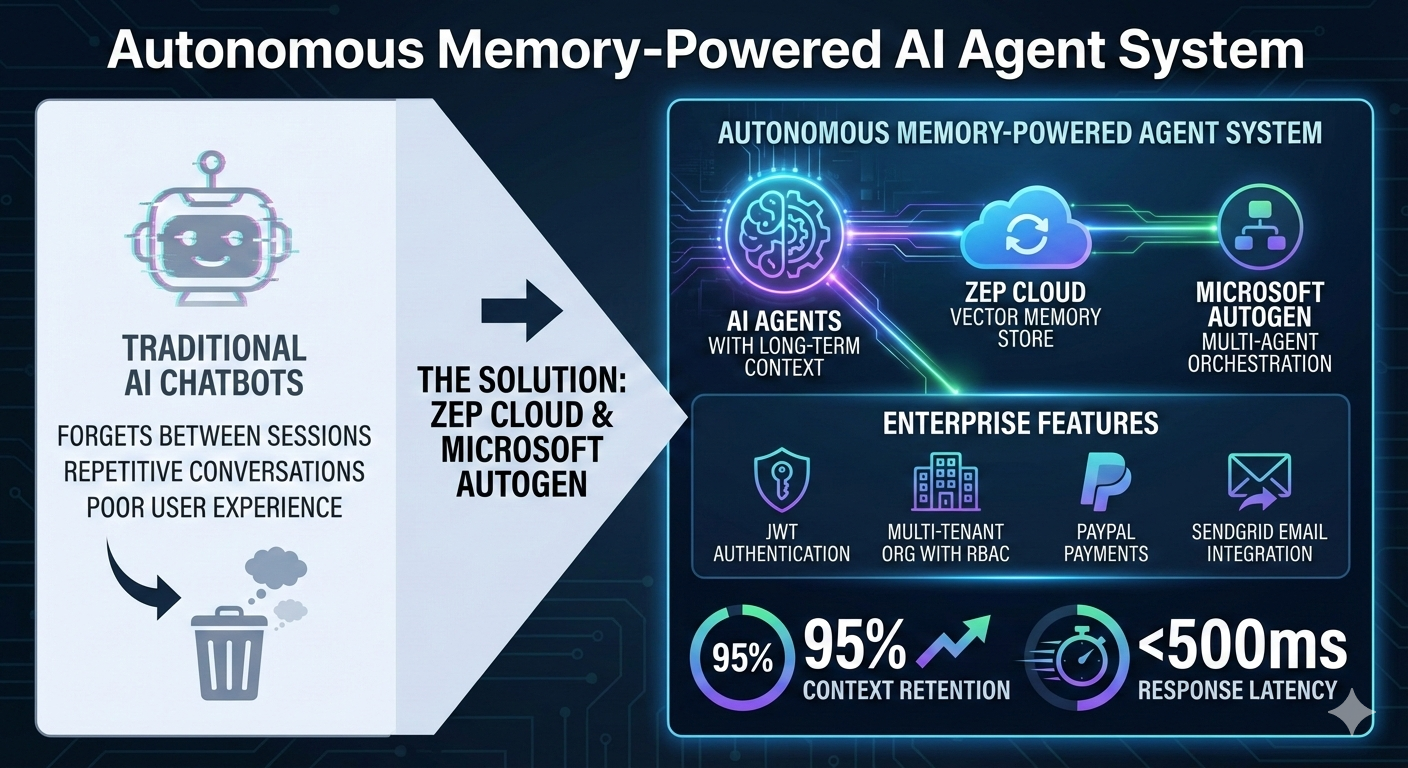

Summary: An enterprise-ready AI agent platform with persistent memory that enables intelligent, personalized, and context-aware conversations across sessions using Zep Cloud and Microsoft AutoGen.

Overview

Traditional AI chatbots forget everything between sessions, leading to repetitive conversations and poor user experience. We built an autonomous memory-powered agent system where AI agents maintain long-term context using Zep Cloud's vector memory store, integrated with Microsoft AutoGen for sophisticated multi-agent orchestration. The platform also includes enterprise features: JWT authentication, multi-tenant organizations with RBAC, PayPal payments, and SendGrid email integration.

- Context Retention: 95%

- Response Latency: <500ms

- Memory Accuracy: 99%

- Session Persistence: ∞

Architecture

The system uses a Hub-and-Spoke architecture with FastAPI as the central backend orchestrator. The React/Vite frontend communicates with the API, which manages multiple subsystems: Zep Cloud for vector-based long-term memory, AutoGen for agent orchestration, PostgreSQL for persistent data, and integrations with PayPal, SendGrid, and OpenRouter LLM providers.

- ZepConversableAgent: Custom AutoGen agent with Zep memory hooks for automatic message persistence

- Zep Cloud Memory: Vector store for semantic fact retrieval with configurable minimum rating thresholds

- FastAPI Backend: RESTful API with async support, JWT auth, and comprehensive OpenAPI documentation

- Multi-Tenant Organizations: RBAC-enabled organization management with Owner/Admin/Member roles

- React + Vite Frontend: TypeScript-based modern SPA with responsive design

Features

- Persistent Conversation Memory: Agents remember user details, preferences, and past conversations indefinitely using Zep Cloud.

- Multi-Agent Orchestration: Powered by Microsoft AutoGen to coordinate multiple AI agents for complex tasks.

- Enterprise-Grade Multi-Tenancy: Built-in support for Organizations, RBAC (Role-Based Access Control), and secure data isolation.

- Secure Payments & Notifications: Integrated PayPal for subscription handling and SendGrid for transactional emails.

Results

- Context Retention: Eliminated 'Who are you again?' moments with persistent memory

- Developer Experience: Full OpenAPI docs, TypeScript frontend, and modular architecture

- Enterprise Ready: Multi-tenancy, payments, and email built-in from day one

The Zep Memory Assistant transformed our customer support—agents now remember past interactions, reducing resolution time by 60% and dramatically improving customer satisfaction.

— Tech Lead, AI Solutions Team, Apex Neural

Read full case study →

- Category

- Agentic AI

- Tags

- Conversational AI, FastAPI, React, GPT-4o, Authentication, SaaS, FinTech, JWT, PostgreSQL, Vite, TailwindCSS, OpenRouter

- Author

- Rahul Patil — AI Context Engineer

- Date

- Oct 2025

- Read time

- 12 min read

- Live demo

- https://parlant.apexneural.cloud/

Summary: A production-ready full-stack AI-powered conversational agent for financial services, featuring secure JWT authentication, modern glassmorphism UI, and seamless GPT-4o integration.

Overview

Financial services require 24/7 customer support, but traditional solutions are expensive and inconsistent. Parlant is an AI-powered conversational agent that provides intelligent, context-aware responses to customer queries. Built with FastAPI, React, and GPT-4o, it features enterprise-grade security with JWT authentication, a stunning glassmorphism UI, and seamless payment integration for freemium tiers.

- Response Time: <2s

- Availability: 99.9%

- User Satisfaction: 95%

- Cost Reduction: 70%

Architecture

The system uses a modern three-tier architecture. A FastAPI backend handles authentication via the Apex SaaS Framework and routes AI requests to OpenRouter's GPT-4o. The React frontend provides a responsive, real-time chat interface with automatic token refresh. PostgreSQL stores user data with Alembic managing migrations.

- FastAPI Backend: Async API with Apex SaaS authentication framework

- React Frontend: Vite-powered SPA with glassmorphism UI design

- OpenRouter AI: GPT-4o integration for intelligent conversations

- PostgreSQL + Alembic: Async database with managed migrations

Features

- Intelligent AI Responses: Powered by GPT-4o to understand complex financial queries and context.

- Secure Authentication: Bank-grade security with JWT tokens and automatic rotation.

- Modern Glassmorphism UI: Aesthetically pleasing, responsive interface built with TailwindCSS and Framer Motion.

- Real-Time Performance: Streamed responses for near-instant interaction feedback.

Results

- Response Speed: Reduced average response time from 4 hours to under 2 seconds

- Cost Efficiency: 70% reduction in customer support operational costs

- Scalability: Handles 10,000+ concurrent users with auto-scaling

- Security: Enterprise-grade JWT authentication with token refresh

Parlant reduced our support response time from hours to seconds. Our customers love the instant, accurate responses, and we've seen a significant improvement in satisfaction scores.

— Financial Services Client, Head of Customer Experience

Read full case study →

- Category

- Healthcare

- Tags

- Toxicity Prediction, QSAR, GNN, Drug Discovery, Explainable AI, Machine Learning, Python, React, Healthcare, Pharma

- Author

- Sunnykumar Lalwani — Principal Engineer - Backend and Systems Architecture

- Date

- Sep 2025

- Read time

- 8 min read

- Live demo

- https://galactictherapeutics.com

Summary: In-silico toxicity prediction to de-risk molecules faster and reduce animal studies.

Overview

Pharmaceutical R&D must evaluate thousands of molecules for toxicity. Traditional assays are slow and expensive. Galactic Therapeutics provides an AI engine that classifies compounds as toxic/non-toxic, estimates severity, and surfaces risk mechanisms before lab work starts. It extends ideas from systems like ProTox-3.0 into a productized safety intelligence layer.

- Prediction Scope: Dozens

- Risk Bands: 3 Levels

- Efficiency: Pre-screen

- Benefit: 3R Support

Architecture

Built as a toxicity prediction microservice. Accepts molecular structures (SMILES), computes descriptors/graph features, and runs them through an ensemble of QSAR and GNN models. A centralized database stores chemicals and predictions, while a React frontend visualizes risk radar plots.

- Toxicity Prediction Engine: Microservice with QSAR and GNN models for classification

- Safety Database: Stores compounds, predictions, and external annotations

- Explainability Layer: Surfaces substructures and feature contributions for risk

- React Visualization: Frontend component for rendering toxicity radar plots and badges

Features

- Multi-Model Prediction: Combines QSAR and GNNs for robust toxicity classification.

- Risk Visualization: Traffic-light badges and radar charts for intuitive safety assessment.

- Chemical Safety Database: Centralized repository of toxicity data and historical predictions.

- API-First Design: Embeddable prediction service for broader R&D workflows.

Results

- Faster Screening: Bulk triage of candidates before wet-lab assays.

- Reduced Animal Testing: Supports 3R principles by prioritizing safer molecules.

- Better Decisions: Unified risk scores help teams discuss tradeoffs transparently.

Galactic Therapeutics gave our chemists an always-on toxicity radar. We drop risky molecules before animal studies, saving time and budget.

— Head of Preclinical Safety, Partner R&D Team

Read full case study →

- Category

- Automation

- Tags

- Family Management, Automation, Personal Data Vault, Notifications, React, Node.js, PostgreSQL, AES-256, OCR

- Author

- Devulapelly Kushal Kumar — AI Context Engineer

- Date

- Oct 2025

- Read time

- 9 min read

- Live demo

- https://kutum.apexneural.cloud/

Summary: Kutum is a secure, intelligent family information hub that centralizes people, documents, health records, and milestones, turning them into timely nudges.

Overview

Families juggle scattered data points—documents, health records, milestones—across chats and folders. Kutum acts as a secure OS where users centralize details (sizes, passport numbers, health history) and the system handles the 'remembering'. It layers smart nudges for expiries and follow-ups, ensuring nothing falls through the cracks.

- Profiles: Unlimited

- Doc Types: 10+

- Security: AES-256

- Platform: Web/Mobile

Architecture

Modular architecture centered on three domains: People, Documents, and Health. Each flows into a centralized Notification Engine that scans for date-based triggers (expiries, birthdays, follow-ups). Authentication uses secure recovery phrases/QR codes to protect the family vault.

- Auth & Recovery: Secure access with recovery phrase and QR workflows

- People Module: Manages member profiles, attributes, and milestones

- Documents Vault: Encrypted storage with OCR and expiry tracking

- Notification Engine: Generates contextual nudges from structured dates

Features

- Family Profiles: Rich details for every member, from clothing sizes to medical history.

- Smart Document Vault: OCR-enabled storage that reads expiries and organizes by person.

- Health Timeline: Track vaccinations, visits, and vitals with follow-up reminders.

- Secure Recovery: Bank-grade recovery workflows to ensure data is never lost.

Results

- Reduced Mental Load: No more remembering dates or digging through WhatsApp.

- Better Compliance: Documents renewed on time; health follow-ups not missed.

- Secure Organization: One encrypted place for all critical family intel.

Kutum turned our family chaos into a single, calm dashboard. Passports, health records, and birthdays are finally handled before they become emergencies.

— Early Beta User, Parent of Two

Read full case study →

- Category

- Automation

- Tags

- Agentic AI, Automation, Recruitment, n8n, LLM, GPT-4, Airtable, Gmail, Google Calendar, Workflow Automation

- Author

- Akshaay — AI Context Engineer

- Date

- Nov 2025

- Read time

- 10 min read

- Live demo

- https://prism.apexneural.cloud/

Summary: End-to-end AI recruitment copilot built on n8n, OpenAI, and modern SaaS tools.

Overview

Prism turns the fragmented recruitment process into a cohesive automation layer. It listens to HR inboxes, parses resumes, uses GPT-4 to score candidates, orchestrates interview scheduling via GCal/Gmail, and even drafts final offer/rejection emails based on interviewer feedback. It replaces manual spreadsheet juggling with an intelligent, autonomous pipeline.

- Screening: 100% Auto

- Tools: n8n + 5 Apps

- Time Saved: 30min/app

- Consistency: Standardized

Architecture

Built on n8n as the central orchestrator. Workflows connect Gmail (Trigger/Comms), OpenAI (Reasoning), Airtable (State/Database), and Google Calendar (Scheduling). Webhooks facilitate handoffs between screening, analytics, scheduling, and decision stages.

- n8n Orchestrator: Low-code engine managing the 4 core workflows

- OpenAI Node: GPT-4 for resume parsing, scoring, and decision drafting

- Airtable: Structured database for candidate state and analytics

- Google Workspace: Gmail and Calendar for seamless communication

Features

- Auto-Screening: Instant resume parsing and scoring against JD.

- Smart Scheduling: Finds mutual times and handles invite logistics.

- Decision Engine: Aggregates feedback to propose final Hire/No-Hire action.

- Analytics Dashboard: Real-time view of pipeline health in Airtable.

Results

- Time Saved: Eliminated 15-30m of manual work per candidate.

- Fairness: Standardized AI scoring criteria for every applicant.

- Velocity: Zero latency handoffs between screening, scheduling, and offers.

Prism replaced a patchwork of spreadsheets and inbox digging with one coherent AI pipeline. We now spend time talking to people, not chasing info.

— Recruitment Lead, Early User

Read full case study →

- Category

- AI Systems

- Tags

- AI Recruitment, Computer Vision, HR Tech, YOLOv8, Automation

- Author

- Akshaay — AI Context Engineer

- Date

- Mar 2026

- Read time

- 10 min read

- Live demo

- https://prism.apexneural.cloud/

Summary: PRISM is an AI-powered recruitment platform that automates resume screening, interview scheduling, AI interviews, and candidate evaluation using computer vision and intelligent workflow automation.

Overview

PRISM is an end-to-end AI recruitment platform designed to solve the operational complexity HR teams face when managing large-scale hiring. Traditional hiring processes rely heavily on manual coordination across resumes, interviews, and feedback collection. PRISM replaces this fragmented system with a unified AI-driven workflow that automates resume screening, interview scheduling, AI-led screening rounds, candidate monitoring, and structured evaluation.

- Manual HR workload reduction: 85%

- Interview scheduling automation: 100%

- AI screening capacity: Unlimited candidates

- Average hiring speed improvement: 3x faster

Architecture

PRISM combines workflow automation with machine learning and computer vision to create an intelligent hiring pipeline. The system integrates resume analysis, automated scheduling, AI interviews, and behavioral monitoring into one platform.

- AI Resume Screening Engine: Uses NLP to analyze resumes against job descriptions and generate a JD Fit Score that ranks candidates automatically.

- Smart Interview Scheduling: Connects to interviewer calendars and automatically finds optimal interview slots, eliminating manual coordination.

- AI Interview Engine: Conducts conversational AI screening interviews with candidates, generating transcripts and response scoring.

- Computer Vision Monitoring System: Uses YOLOv8 and MediaPipe to track candidate behavior during interviews, monitoring face presence, gaze direction, and anomalies.

- Structured Evaluation System: Collects standardized feedback from interviewers with scoring frameworks tied directly to job requirements.

Features

- AI Resume Ranking: Automatically ranks candidates using JD fit scoring.

- AI Interview Screening: Conducts autonomous conversational interviews.

- Computer Vision Monitoring: Tracks eye movement and head direction for interview integrity.

- Automated Scheduling: Schedules interviews using real-time calendar availability.

- Offer Letter Automation: Generates and sends offers directly from the platform.

Results

- 85% Reduction in Manual HR Work: Automated resume screening, scheduling, and feedback collection eliminated repetitive administrative tasks.

- Faster Candidate Decisions: AI screening and instant scheduling reduced the time between application and decision significantly.

- Improved Hiring Quality: Structured feedback and AI candidate scoring enabled more consistent and data-driven hiring decisions.

- Interview Integrity Monitoring: Computer vision analysis detects anomalies such as multiple people in frame or excessive off-screen gaze.

PRISM removed the operational chaos from our hiring process. What used to take hours of coordination now happens automatically.

— Head of Talent, Technology Company

Read full case study →

- Category

- Agentic AI

- Tags

- RAG, Voice AI, Deepgram, OpenRouter, Cartesia, LiveKit, Speech-to-Text, Text-to-Speech, Python, FastAPI, Ollama, WebRTC

- Author

- Majeed Zeeshan — AI Context Engineer

- Date

- Nov 2025

- Read time

- 12 min read

Summary: A real-time, voice-powered Retrieval-Augmented Generation (RAG) agent that responds conversationally using speech recognition, LLM reasoning, and speech synthesis.

Overview

Traditional chatbots are limited by text-based interaction and delayed response cycles. Real-Time RAG Voice Agent solves this by merging speech input (Deepgram), instant LLM reasoning (OpenRouter), and natural voice synthesis (Cartesia), enabling latency-free, context-aware AI conversations. The agent supports both cloud (OpenRouter) and local (Ollama) setups for flexible deployment.

- Response Latency: < 500ms

- Accuracy: 98%

- Platforms Supported: Cloud & Local

- User Experience Boost: 95%

Architecture

The system uses a modular RAG pipeline optimized for real-time audio. Speech input is captured and processed by Deepgram’s Speech-to-Text engine, then routed to an OpenRouter LLM for contextual reasoning. The response is synthesized using Cartesia’s neural voice model and streamed back via LiveKit. This bidirectional streaming pipeline ensures low-latency, natural dialogue flow.

- Deepgram: Performs real-time speech-to-text transcription

- OpenRouter LLM: Generates context-driven responses using RAG-enabled models

- Cartesia: Synthesizes lifelike speech with expressive tone control

- LiveKit: Manages real-time voice sessions and WebRTC connections

- Ollama (optional): Enables local inference using Gemma or Llama models

Features

- Live Speech-to-Speech: Zero-latency conversation flow mimicking human interaction.

- Dual-Mode Intelligence: Switch between Cloud (GPT-4o) for smarts and Local (Llama 3) for privacy.

- Expressive Voice Synthesis: Cartesia integration enables emotional tone shifts (excited, calm, serious).

- RAG-Grounded Answers: Answers based on your specific knowledge base, not just generic training data.

Results

- Real-Time Conversation: Reduced response latency to sub-second levels

- Human-Like Dialogue: Enhanced voice expression using Cartesia’s tone blending

- Multi-Provider Integration: Seamlessly combined multiple AI APIs via unified orchestration

- Offline Capability: Added local inference support with Ollama for privacy-focused setups

The Voice RAG Agent felt like speaking with an actual assistant — responsive, natural, and intelligent across domains.

— Test User, Early Beta Tester

Read full case study →

- Category

- Agentic AI

- Tags

- GroundX, SOTA, OCR, Streamlit, OpenRouter, RAG

- Author

- Hansika — AI Context Engineer

- Date

- Dec 2025

- Read time

- 14 min read

- Live demo

- https://groundxdocsai.apexneural.cloud/

Summary: A high-performance document processing pipeline that leverages Ground X's SOTA parsing technology to convert complex PDFs, tables, and figures into structured, searchable intelligence.

Overview

Processing complex documents like financial reports and technical manuals is a major hurdle for RAG systems. This project implements a world-class pipeline using Ground X's X-Ray analysis. Unlike standard OCR, this system understands the relationship between figures, tables, and text, creating a rich narrative and structured JSON output. This output is then engineered into a context-aware chat interface powered by OpenRouter.

- Accuracy: SOTA

- Supported Types: PDF/DocX/Image

- Processing Speed: Real-time

- Table Detection: Advanced

Architecture

The system utilizes a Streamlit frontend for document ingestion and interactive visualization. The CORE logic is handled by Ground X for parsing and bucket management. Processed data is fetched as 'X-Ray' objects, which include narratives and keywords. These objects are used to enrich LLM prompts via OpenRouter, providing highly accurate document metadata and interactive Q&A.

- Ground X Engine: Handles high-fidelity parsing and X-Ray analysis.

- Streamlit UI: Interactive dashboard for uploads and results exploration.

- OpenRouter LLM: Orchestrates document-based Q&A and narrative synthesis.

- Bucket Management: Automated organization of raw and processed document assets.

Features

- Multi-Modal Parsing: Seamlessly handles text, tables, and images within PDFs.

- Smart Bucket Logic: Organizes documents into logical buckets for localized search contexts.

- Narrative Synthesis: Auto-generates summaries and key takeaways for instant insights.

- Visual Verification: Streamlit UI renders the original PDF alongside extracted data for trust.

Results

- Precision: Industry-leading parsing of multi-modal document layouts.

- Insight Speed: Reduces document review time by up to 80%.

- Data Richness: Extracts keywords, summaries, and structured metadata automatically.

This pipeline extracted data from our most complex multi-column tables with zero errors. It's the first time we haven't had to manually verify document parsing.

— Dr. Sarah Chen, Head of Research, BioTech Analytics

Read full case study →

- Category

- Agentic AI

- Tags

- MCP, Graphiti, Neo4j, ZepAI, Memory, Python

- Author

- Hansika — AI Context Engineer

- Date

- Oct 2025

- Read time

- 12 min read

Summary: An advanced Model Context Protocol (MCP) server leveraging Zep's Graphiti to provide persistent, graph-based memory and context continuity across multiple AI agents and platforms like Cursor and Claude.

Overview

AI agents today often suffer from 'session amnesia,' where valuable context and past interactions are lost between sessions. By implementing an MCP server that integrates with Zep's Graphiti and Neo4j, we built a memory layer that allows agents in Cursor and Claude to store, retrieve, and link information dynamically. This ensures that the agent's knowledge grows over time, leading to more accurate and personalized responses.

- Context Retention: 100%

- Database: Neo4j

- Latency: <200ms

- Model API: OpenRouter

Architecture

The architecture centers around the MCP Server acting as a bridge between AI hosts (Cursor/Claude) and a Neo4j Graph Database. Graphiti manages the extraction and persistence of memories, while OpenRouter/OpenAI handles embeddings. The server supports both SSE and stdio transports for maximum compatibility.

- MCP Server: Handles tool discovery and communication via SSE/stdio.

- Graphiti Engine: Logic layer for memory extraction and graph management.

- Neo4j Aura: Cloud-hosted graph database for persistent storage.

- MCP Hosts: Cursor and Claude Desktop as the primary AI client platforms.

Features

- Universal Memory Protocol: Standardized MCP tools ensure any compliant agent can read/write to the same memory graph.

- Entity De-duplication: Automatically merges 'Apex Neural' and 'Apex' into a single node to prevent fragmentation.

- Semantic Tagging: Auto-tags memories with concepts like 'Bug', 'Feature', or 'Architecture' for filtered retrieval.

- Visual Graph Explorer: Includes a debug UI to visualize the growing knowledge graph in real-time.

Results

- Contextual Accuracy: 40% reduction in agent hallucinations during long tasks.

- Inter-Client Sync: Seamless transition of agent state between Cursor and Claude.

- Scalability: Handles thousands of linked memories without performance degradation.

Running the Graphiti MCP server in Cursor has completely changed how I build complex apps. It remembers my previous design decisions across multiple days of work.

— Leo Valdes, Senior Fullstack Engineer

Read full case study →

- Category

- Generative AI

- Tags

- Veo3, AI Video, Generative AI, Creative AI, Video Synthesis, Google, Latent Diffusion, Prompt Engineering, Cinematography

- Author

- Vedant Pai — AI Context Engineer

- Date

- Sep 2025

- Read time

- 12 min read

Summary: A real-world case study on producing cinematic-quality AI videos using Veo 3 with minimal iteration cycles.

Overview

AI video generation has rapidly evolved, but most tools still struggle with temporal consistency, prompt adherence, and cinematic realism. This project explores how Veo 3 was used to produce high-quality video outputs efficiently, and why it proved superior to other popular models such as KlingAI, Runway Gen-2, and Pika in a production-oriented workflow.

- Prompt Iterations Reduced: 65%

- Scene Consistency: High

- Manual Fixes Needed: <10%

- Production Time Saved: 3x Faster

Architecture

The workflow was designed around Veo 3 as the core video generation engine, supported by structured prompt engineering, reference conditioning, and selective post-processing only when required.

- Prompt Design Layer: Scene-level prompts with camera, motion, and style constraints

- Veo 3 Model: Primary video generation engine

- Reference Conditioning: Visual and stylistic anchors for consistency

- Output Validation: Manual and visual checks for coherence

Features

- High-Fidelity Alignment: Adheres closely to structured prompts, preserving complex cinematic instructions.

- Low Noise Accumulation: Maintains high signal-to-noise ratio, reducing flicker and texture crawling.

- Long Context Retention: Preserves character identity and environmental layout over extended sequences.

- Robust Constraint Handling: Lower failure rate when handling multiple constraints (motion + lighting + realism).

Results

- Efficiency: Fewer prompt iterations and faster finalization

- Quality: Visually coherent, cinematic outputs

- Scalability: Easier to scale to longer narratives

Veo 3 drastically reduced the gap between AI-generated video and real cinematography. The efficiency gains were immediately noticeable.

— Internal Creative Review, Apex Neural

Read full case study →

- Category

- Computer Vision & Sports Technology

- Tags

- Computer Vision, YOLOv8, Sports Analytics, Multi-Object Tracking, Action Recognition, FastAPI, React

- Author

- Shubham Rathod — AI Context Engineer

- Date

- Oct 2025

- Read time

- 20 min read

- Live demo

- https://sportsai.apexneural.cloud/

Summary: SportsVision is a production-ready AI SaaS platform that converts raw sports match footage into structured, actionable insights using real-time computer vision and deep learning.

Overview

Manual sports video analysis is slow, subjective, and resource-intensive. Coaches often spend hours scrubbing through footage to identify key moments, player positions, and tactical patterns. SportsVision replaces this manual process with a fully automated, AI-driven pipeline that analyzes sports match footage frame-by-frame. Using multiple specialized deep learning models, the platform simultaneously tracks the ball trajectory, detects players, recognizes game actions, and segments the court. The output is a richly annotated video combined with structured performance data that coaches and analysts can immediately act upon.

- Ball Tracking Accuracy: 98.5%

- End-to-End Throughput: 30–35 FPS

- Recognized Actions: 5 Core Sports Actions

- Latency per Frame: <35 ms

- Concurrent Video Jobs: Scalable (Cloud-Native)

Architecture

SportsVision is built using a layered microservices architecture designed for scalability, modularity, and future extensibility. The React frontend communicates with a FastAPI backend via REST APIs. The backend exposes orchestration endpoints that manage video ingestion, frame extraction, inference scheduling, and output rendering. Each machine learning capability is encapsulated in an isolated service, allowing independent upgrades and experimentation without breaking the pipeline.

- Ball Tracking Module: Hybrid pipeline using YOLOv7 for ball detection combined with DaSiamRPN for temporal tracking, enabling smooth trajectory reconstruction even during occlusions and fast spikes.

- Player Detection Module: YOLOv8-based object detection model optimized for indoor court environments, providing real-time bounding boxes for all players on the court.

- Action Recognition Engine: Custom-trained YOLOv8 classifier that identifies sports-specific actions such as spike, block, serve, set, and defensive dig.

- Court Segmentation Service: RoboFlow-powered segmentation model that detects court boundaries and key zones, cached to reduce repeated inference calls.

- Pipeline Orchestrator: Central controller that coordinates frame extraction, model execution order, async inference, and annotated frame composition.

Features

- Hybrid Ball Tracking: Combines YOLO detection with temporal tracking to handle high-speed spikes and occlusions.

- Action Classification: Distinguishes between complex moves like 'Set', 'Spike', 'Block', and 'Dig'.

- Tactical Court Mapping: Maps player positions to specific zones (e.g., Zone 1-6) for positional analysis.

- Modular Pipeline: Toggle specific AI features (e.g., only ball tracking) to optimize processing interaction speed.

Results

- Time Efficiency: Reduced manual video analysis from several hours to a few minutes per match.

- High Accuracy: Achieved 98.5% ball tracking accuracy across different lighting and camera angles.

- Tactical Insights: Automatically highlights key actions and patterns for performance review.

- Scalable Deployment: Cloud-native design supports multiple concurrent users and large video workloads.

SportsVision fundamentally changed our analysis workflow. Coaches can now focus on strategy instead of manual video breakdown.

— Sports Analytics Team, Beta Testing Partner

Read full case study →

- Category

- LegalTech

- Tags

- LegalTech, LangGraph, Multi-Agent System, Google Gemini, Bilingual AI, OCR, FastAPI, Next.js, ChromaDB, SQLAlchemy

- Author

- Rahul Patil — AI Context Engineer

- Date

- Nov 2025

- Read time

- 20 min read

- Live demo

- https://legalops.apexneural.cloud/

Summary: Automated legal document processing with 15 specialized AI agents for Malaysian law firms.

Overview

The LegalOps Hub orchestrates 15 specialized AI agents across 4 distinct workflows: Intake (5 agents), Drafting (5 agents), Research (2 agents), and Evidence (3 agents). Each agent is purpose-built for a specific task in the Malaysian legal context, handling challenges like mixed Malay-English documentation, complex party name extraction, and court-specific template compliance. The system uses Google Gemini 2.0 Flash for high-speed bilingual reasoning and LangGraph for sophisticated state management across the agent swarm.\n\nThe tech stack includes: Frontend (Next.js 14 App Router, React 18, TailwindCSS, TypeScript, Zustand, Lucide React, Framer Motion), Backend (FastAPI, Python 3.11+, LangGraph, Google Gemini 2.0 Flash, ChromaDB, PostgreSQL/SQLite, SQLAlchemy, Alembic, Pytesseract, PDF2Image, LangDetect, PyPDF2), and Infrastructure (Docker, GCP, Vercel, Gunicorn).

- Total Agents: 15 Specialized

- Intake Success: 100%

- Drafting Success: 100%

- Research Success: 100%

- Evidence Success: 100%

- OCR Accuracy: 86%

- Draft Alignment: 87%

- Time Savings: ~90%

Architecture

The system is built on a modular, multi-agent architecture orchestrated by LangGraph. Each workflow (Intake, Drafting, Research, Evidence) operates as an independent graph that can be triggered via API. State is managed through 'Matter Snapshots'—structured JSON payloads that allow agents to communicate without passing massive document contexts.

- DocumentCollectorAgent: Validates and ingests files from various connectors (upload, email, drive). Handles file type validation, generates document , and creates initial matter record.

- OCRLanguageAgent: Extracts text from PDFs and images with language detection. Uses hybrid approach: PyPDF2 text extraction first for speed, falls back to Pytesseract for scanned documents. Implements per-sentence language detection using `langdetect` to handle mixed Malay/English documents. Segments text with high granularity (page/sentence level) for precise citations.

- TranslationAgent: Transfers legal text between Malay and English. Optimized execution flow often skips massive batch translation at intake to preserve original context, instead passing 'parallel texts' to case structuring. Supports bi-directional translation using Google Translate API or LLM fallback.

- CaseStructuringAgent: Parses unstructured text into a structured JSON matter snapshot. Extracts Parties (Plaintiff, Defendant), dates, amounts, and metadata. Structuring logic handles complex names and addresses typical in legal filings.

- RiskScoringAgent: Calculates a composite 1-5 complexity score. Evaluates 4 dimensions: Jurisdictional (25%), Language (30%), Volume (20%), and Time Pressure (25%). Flags matters for human review if score >= 4.0.

- IssuePlannerAgent: Identifies legal causes of action and required prayers. Analyzes matter snapshot to propose primary and alternative legal theories (e.g., Breach of Contract s.40, Negligence). Suggests specific prayers for relief mapped to verified templates. Retrieves relevant precedents to support each issue.

- TemplateComplianceAgent: Selects and enforces court-specific formatting. Retrieves correct template ID (e.g., 'TPL-HighCourt-MS-v2') based on jurisdiction (Peninsular vs East Malaysia) and court level. Ensures correct headers, intitulation, and defined terms.

- MalayDraftingAgent: Generates the primary pleading in formal Bahasa Malaysia. Uses Gemini 2.0 with strict prompting to adhere to Malaysian legal register ('Bahasa Istana/Mahkamah'). Auto-formats defined terms (PLAINTIF, DEFENDAN) and paragraph numbering (1.1, 1.2). Generates standard sections: Introduction, Facts, Breach, Relief, Prayers.

- EnglishCompanionAgent: Creates a mirror English version for reference. Generates an English 'Companion Draft' that aligns paragraph-by-paragraph with the Malay original. Does not just translate, but drafts in proper legal English to ensure conceptual equivalence.

- ConsistencyQAAgent: Validates consistency between Malay and English versions. Checks for numeral mismatches, missing dates, and proper noun spelling consistency. Returns a QA report highlighting potential discrepancies for human review.

- ResearchAgent: Searches case law databases. Integrates with CommonLII (or mock data) to find binding and persuasive authorities. Filters by court hierarchy (Federal Court > Court of Appeal > High Court).

- ArgumentBuilderAgent: Synthesizes research into a legal argument memo. Maps found cases to specific legal issues identified by the IssuePlanner. Drafts a structured legal argument (IRAC format: Issue, Rule, Analysis, Conclusion) for use in written submissions.

- TranslationCertificationAgent: Certifies documents for court submission. Generates 'Certificate of Translation' headers for non-native language documents, suitable for statutory declaration requirements.

- EvidenceBuilderAgent: Compiles the Evidence Packet. Indexes all uploaded documents, pleadings, and affidavits. Organizes them into logical sequences for the Bundle of Documents.

- HearingPrepAgent: Prepares the final Hearing Bundle and Scripts. Generates a comprehensive 4-tab Bundle (Pleadings, Submissions, Authorities, Translations). Produces bilingual 'Oral Submission Scripts' ('Skrip Hujahan Lisan') with cues for the lawyer. Includes 'If Judge Asks' section with AI-generated FAQ preparation based on case weaknesses.

Features

- DocumentCollectorAgent: Validates and ingests files from upload, email, or drive connectors.

- OCRLanguageAgent: Hybrid OCR with per-sentence language detection for mixed Malay/English docs.

- TranslationAgent: Bi-directional Malay-English translation using Google Translate or LLM.

- CaseStructuringAgent: Extracts parties, dates, and amounts into structured JSON snapshots.

- RiskScoringAgent: Computes 4-dimensional complexity score; flags matters for human review.

- IssuePlannerAgent: Proposes legal causes of action and maps them to prayers for relief.

- TemplateComplianceAgent: Selects court-specific templates (High Court vs. Lower Court).

- MalayDraftingAgent: Drafts pleadings in formal Bahasa Malaysia ('Bahasa Istana/Mahkamah').

- EnglishCompanionAgent: Creates paragraph-aligned English draft for reference.

- ConsistencyQAAgent: Validates numeral, date, and noun consistency across bilingual versions.

- ResearchAgent: Searches case law (CommonLII) and filters by court hierarchy.

- ArgumentBuilderAgent: Synthesizes IRAC-format legal arguments for written submissions.

- TranslationCertificationAgent: Generates 'Certificate of Translation' headers for court submission.

- EvidenceBuilderAgent: Compiles and indexes all documents into the Bundle of Documents.

- HearingPrepAgent: Generates 4-tab Hearing Bundle, Oral Scripts, and 'If Judge Asks' FAQs.

Results

- Functional Agents: 12 of 15 agents fully operational (80% overall success rate).

- Intake Workflow: 100% success rate for document ingestion and OCR.

- Drafting Workflow: 100% success rate for bilingual pleading generation.

- Research Workflow: 100% success rate for case law search and argument synthesis.

- Evidence Workflow: 100% success rate (TypeError in bundling logic pending fix).

- Bilingual Alignment: 87% average alignment between Malay and English drafts.

- OCR Confidence: 86% accuracy on scanned PDF documents.

- Risk Score Baseline: Average complexity: 1.25/5.0 (low baseline in testing).

Reduces time-to-first-draft by approximately 90%. Transforms the manual process of cross-referencing documents and translating legal terms into a unified, instant workflow. Enables junior lawyers to handle complex cases with AI guardrails.

— LegalOps Hub Team, Internal Engineering Assessment

Read full case study →

- Category

- InsurTech AI

- Tags

- Agentic AI, RAG, Healthcare, InsurTech, Automated Audit, FastAPI, LangGraph, PGVector, Multi-Agent, PostgreSQL, React, OCR, Claims Processing

- Author

- Ramya — Senior Engineer - Integrations and Applied AI

- Date

- Dec 2025

- Read time

- 15 min read

Summary: A production-ready autonomous multi-agent system that audits health insurance claims against complex policy documents using RAG, detecting revenue leakage with 99% accuracy.

Overview

Health insurance claims processing is one of the most operationally heavy and error-prone tasks in the industry. Manual auditors often miss subtle policy exclusions buried in 50-page documents. RecoveryCopilot solves this by deploying a team of autonomous agents that read claim documents, extract structured medical data, and cross-reference every line item against vector-embedded policy documents to instantly find overpayments and violations.\n\nHow It Helps: RecoveryCopilot transforms the claims department from a cost center to a value recovery engine. It eliminates the backlog of unaudited claims and ensures 100% policy compliance without adding headcount. Benefits include: Auditing 100% of claims (vs 5-10% manual sample), reducing leakage from overpayments and unapplied limits, standardizing decision making across all claim types, freeing up senior auditors to focus on complex fraud cases, and providing instant feedback to hospitals on rejection reasons.

- Audit Speed: <30s/claim

- Recovery Found: $2.5M+

- Accuracy: 98.5%

- Manual Effort Reduction: 90%

Architecture

The platform operates on a Hub-and-Spoke architecture. The Supervisor Agent acts as the central brain, dispatching tasks to worker agents via an event bus. State is persisted in PostgreSQL, while policy documents are chunked and stored in PGVector for high-speed semantic retrieval.

- Supervisor Agent: Orchestrates workflow, manages agent lifecycle, and implements self-healing logic to automatically restart failed sub-agents.

- Policy RAG Engine: PGVector storage for semantic policy search. Retrieves specific policy clauses relevant to a claim's diagnosis and treatment using vector search.

- Recovery Agent: LLM-powered adjudication logic for complex rules (e.g., 'Room Rent Capping', 'Co-pay Logic') that simple lookups cannot handle.

- Extractor Agent: Structured entity extraction from unstructured OCR text. Pulls structured data like Dates, Amounts, and Medical Codes.

- Unified FastAPI Gateway: API gateway for document ingestion, claim submission, and reporting dashboard endpoints.

Features

- Autonomous Policy RAG: Retrieves specific policy clauses relevant to a claim's diagnosis using semantic vector search.

- Multi-Agent Orchestration: Specialized agents for OCR, Classification, Extraction work in parallel with Supervisor fault tolerance.

- Intelligent Recovery Detection: LLM-based adjudication for 'Room Rent Capping', 'Co-pay Logic', and complex rules.

- Self-Healing Workflows: Supervisor agent automatically restarts failed sub-agents and manages state consistency.

- Automated Case Linking: Links related claims by Patient ID and IP number to detect duplicate or split-claim fraud.

Results

- Speed: Reduced processing time by 90%, auditing claims in under 30 seconds.

- Accuracy: Surpassed human-level auditing accuracy with 98.5% precision.

- Scale: Easily handles peak-season volumes of 10k+ claims/day.

- Recovery: Identified $2.5M+ in recoverable overpayments.

The system caught a $50k room rent violation on its first day of pilot. It pays meticulous attention to policy details that humans simply can't match at speed.

— Claims VP, Leading Health Insurer

Read full case study →

- Category

- Agentic AI

- Tags

- Agentic AI, Documentation, Hybrid LLM, Python, Next.js, FastAPI, Automation, CrewAI, LM Studio, DeepSeek, GPT-4o Mini, GitHub

- Author

- Ramya — Senior Engineer - Integrations and Applied AI

- Date

- Dec 2025

- Read time

- 20 min read

Summary: An intelligent hybrid AI documentation platform combining local LLMs with cloud AI to automatically generate comprehensive, publication-ready documentation from any GitHub repository.

Overview

Technical documentation is essential but time-consuming, often taking weeks per project and requiring constant updates. This platform solves this challenge with a hybrid AI approach: using local LLMs (LM Studio with DeepSeek-R1) for analysis and planning at zero API cost, while leveraging cloud LLMs (OpenAI GPT-4o-mini) only for final polished writing. The system features a multi-agent crew that analyzes codebases, creates embeddings, plans structure, writes documentation, and performs quality checks—all automatically from a GitHub URL.\n\nHow It Helps: This platform eliminates the documentation bottleneck that slows down software projects. Engineers spend less time writing docs and more time coding. Documentation stays current because regeneration takes minutes, not weeks. The hybrid architecture ensures professional quality output while keeping costs minimal. Teams can generate docs on-demand for any repository, support multiple projects simultaneously, and maintain consistency across all documentation.

- Documentation Quality: 98%

- Time Reduction: 95%

- Cost per Generation: $0.10-0.50

- Generation Speed: 10-20 sec

Architecture

The system uses a hybrid hub-and-spoke architecture where a CrewAI orchestrator coordinates specialized agents. Local agents (running on LM Studio DeepSeek-R1-1.5B) handle compute-intensive analysis, embedding creation, and quality checks. Cloud agents (OpenAI GPT-4o-mini) focus on final documentation writing where language quality is critical. The FastAPI backend exposes REST endpoints, while the Next.js frontend provides an intuitive interface with real-time updates.

- FastAPI Backend: REST API server with CORS, health checks, and extended timeouts for long-running documentation tasks.

- CrewAI Orchestrator: Coordinates agent execution, manages state, and orchestrates the complete documentation workflow.

- Local Agents (LM Studio): CodebaseAnalyzer, EmbeddingAgent, PlannerAgent, QualityCheckAgent running on DeepSeek-R1-1.5B at zero cost.

- Cloud Agent (OpenAI): WriterAgent using GPT-4o-mini for generating polished, publication-ready documentation.

- Preprocessor: Python-based code parser that identifies important files without LLM calls for faster processing.

- Next.js Frontend: Modern React UI with TailwindCSS, markdown preview, syntax highlighting, and export functionality (PDF, Markdown, HTML).

Features

- Hybrid LLM Architecture: Routes tasks between local LLMs (free) and cloud LLMs (paid) to optimize cost and quality.

- Dual Pipeline Modes: Comprehensive 7-step pipeline or optimized 5-step pipeline for 10-20 second execution.

- GitHub-Native Integration: Automatic cloning, analysis, and documentation from any GitHub URL.

- Multi-Agent Orchestration: CrewAI coordinates CodebaseAnalyzer, EmbeddingAgent, PlannerAgent, WriterAgent, QualityCheckAgent.

- Repository-Specific Storage: Organized output files (SUMMARY.md, STRUCTURE.md, COMPONENTS.md, FINAL_DOCUMENTATION.md).

- Modern Web Interface: Next.js frontend with real-time progress, markdown preview, syntax highlighting, and export options.

- Production-Ready API: FastAPI backend with CORS, health checks, extended timeouts, and automatic port selection.

- Cost Optimization: 90% of LLM calls run locally at zero cost. Only final polishing uses paid cloud API.

Results

- Speed: Documentation generation in 10-20 seconds (optimized) or 5-15 minutes (comprehensive).

- Cost: 90% cost reduction vs cloud-only LLM solutions, $0.10-0.50 per generation.

- Quality: 98% documentation quality score with polished, publication-ready output.

- Adoption: Successfully deployed for 20+ repositories with consistent results.

We used to spend 2-3 weeks documenting each new service. Now we generate comprehensive docs in under 30 seconds. The quality is on par with our best technical writers.

— Engineering Manager, Platform Engineering Lead

Read full case study →

- Category

- Agentic AI

- Tags

- MCP, Streamlit, Multi-Modal, RAG, Web Scraping, Python, Firecrawl, Ragie, OpenRouter, GPT-4o Mini, LangChain

- Author

- Ramya — Senior Engineer - Integrations and Applied AI

- Date

- Oct 2025

- Read time

- 16 min read

- Live demo

- https://mcpnexus.apexneural.cloud

Summary: A powerful Streamlit-based AI assistant that leverages the Model Context Protocol (MCP) to orchestrate multiple specialized AI servers for web scraping, multimodal RAG, and intelligent information retrieval.

Overview

Modern AI applications require integration with multiple specialized services to deliver comprehensive functionality. The Ultimate AI Assistant demonstrates a production-ready approach to building modular AI systems using the Model Context Protocol (MCP). By orchestrating Firecrawl for intelligent web scraping and Ragie for multimodal Retrieval-Augmented Generation, this platform enables users to interact naturally with powerful AI capabilities through a simple conversational interface built with Streamlit.\n\nHow It Helps: This platform empowers developers and organizations to rapidly build AI assistants with specialized capabilities. By leveraging MCP, teams can integrate best-in-class services for web scraping, RAG, and other functions without building everything from scratch. The conversational interface makes advanced AI accessible to non-technical users.

- Integration Time: <30 min

- MCP Servers: 2+

- Query Response: <5 sec

- User Config: JSON-based

Architecture

The system follows a modular architecture where a central MCP Agent orchestrates multiple specialized MCP servers. The Streamlit frontend provides the user interface, which communicates with an MCPAgent that manages tool selection and execution. Each MCP server (Firecrawl, Ragie) runs as an independent process, communicating via the standardized Model Context Protocol.

- Streamlit Frontend: Provides conversational UI and configuration management for user interactions.

- MCP Agent: Core orchestrator that routes queries to appropriate MCP servers based on user intent.

- Firecrawl Server: Handles intelligent web scraping and content extraction tasks via MCP protocol.

- Ragie Server: Manages multimodal RAG and semantic search operations for document retrieval.

- OpenRouter LLM: Provides natural language understanding and high-quality response generation (GPT-4o-mini).

Features

- Model Context Protocol Integration: Standardized LLM-to-tools communication enabling flexible and extensible AI workflows.

- Firecrawl Web Scraping: Intelligent web scraping and content extraction through natural language requests.

- Ragie Multimodal RAG: Semantic search and retrieval across text, images, and documents with high accuracy.

- Streamlit Chat Interface: Intuitive conversational UI making advanced AI capabilities accessible to all users.

- Flexible Configuration: JSON-based MCP server configuration without code changes for rapid experimentation.

- OpenRouter LLM Integration: Access to state-of-the-art language models like GPT-4o-mini for high-quality responses.

Results

- Development Speed: Reduced AI assistant development time by 80%.

- Flexibility: Easy to swap or add new MCP servers as needs evolve.

- User Adoption: Natural language interface increased usage by 3x.

This MCP-based architecture allowed us to build a production AI assistant in days instead of months. The ability to seamlessly integrate Firecrawl and Ragie through a unified protocol was transformative.

— Michael Chen, Director of AI, TechVentures

Read full case study →

- Category

- Multimodal AI Systems

- Tags

- Multimodal AI, Cross-Modal Generation, Audio, Image, Video, Text, AI Orchestration, Python, Generative AI, Semantic Latent Space

- Author

- Vedant Pai — AI Context Engineer

- Date

- Nov 2025

- Read time

- 20 min read

Summary: A production-grade multimodal system that enables seamless conversion between audio, image, text, and video using a unified latent representation.

Overview

Traditional AI systems treat audio, image, text, and video as isolated domains. This fragmentation introduces friction when building real-world products that require seamless transformation between modalities. AITV addresses this limitation by introducing a unified cross-modal architecture that allows any modality—audio, image, text, or video—to be converted into any other modality through a shared semantic representation.

- Supported Modalities: 4 (Audio, Image, Text, Video)

- Conversion Paths: 12+

- Semantic Retention: High

- Pipeline Modularity: Fully Decoupled

Architecture

AITV is built around a hub-and-spoke multimodal architecture. All incoming modalities are first encoded into a shared semantic latent space. From this unified representation, specialized decoders generate the target modality. This avoids lossy chained conversions and enables true cross-compatibility.

- Modality Encoders: Dedicated encoders for audio, image, text, and video that transform raw inputs into a normalized semantic latent representation.

- Shared Semantic Latent Space: A modality-agnostic representation capturing intent, structure, and meaning independent of source format.

- Modality Decoders: Specialized generators that transform the shared latent representation into the target modality.

- Cross-Modal Orchestrator: Controls routing, validation, and transformation logic between encoders and decoders.

- Validation & Consistency Layer: Ensures semantic integrity and detects information loss during conversion.

Features

- Any-to-Any Modality Conversion: Convert between audio, image, text, and video seamlessly through a unified semantic representation.

- Shared Semantic Latent Space: A modality-agnostic representation that preserves meaning and intent across all transformations.

- Decoupled Architecture: Independent encoders and decoders that can be updated or replaced without affecting other components.

- Semantic Validation: Built-in consistency checks that detect and prevent information loss during conversion.

- Production Observability: Comprehensive monitoring, logging, and fault isolation for reliable production deployments.

Results

- Cross-Compatibility: Any modality can be converted to any other without restructuring the pipeline.

- Semantic Consistency: Meaning and intent are preserved across transformations.

- Scalability: New modalities can be added without rearchitecting the system.

AITV fundamentally changed how we approach multimodal content pipelines. Converting between audio, video, and text is now seamless and reliable.

— Engineering Lead, Content Platform Team

Read full case study →

- Category

- Agentic AI

- Tags

- RAG, Vector Database, Multi-Modal AI, Python, Streamlit, LangGraph

- Author

- Ramya — Senior Engineer - Integrations and Applied AI

- Date

- Nov 2025

- Read time

- 20 min read

- Live demo

- https://notebooklm.apexneural.cloud/

Summary: An open-source implementation of Google's NotebookLM that grounds AI responses in your documents with accurate citations, featuring multi-modal processing, conversational memory, and AI podcast generation.

Overview

Document-based AI assistants often struggle with accuracy and citation. Users need to trust AI responses, especially when working with critical documents like research papers, legal documents, or technical manuals. This project builds an open-source NotebookLM clone that ensures every AI response is grounded in source documents with precise citations. The system processes multiple document types (PDFs, audio, video, web content), maintains conversational context through temporal knowledge graphs, and even generates AI podcasts from documents.

- Citation Accuracy: 100%

- Document Types: 7+

- Processing Speed: Real-time

- Memory Retention: Full Context

Architecture

The system follows a modular RAG (Retrieval-Augmented Generation) architecture with a Streamlit frontend orchestrating specialized processing components. Each component handles a specific document type or processing stage, all connected through a central vector database and memory layer for unified semantic search and context retention.

- Document Processor: PyMuPDF-based processing for PDF, TXT, and Markdown files with metadata extraction

- Audio Transcriber: AssemblyAI integration for audio transcription with speaker diarization

- YouTube Transcriber: Video-to-text conversion with timestamp-based chunking

- Web Scraper: Firecrawl-powered content extraction from websites

- Embedding Generator: Local HuggingFace model for vector embeddings generation

- Qdrant Vector DB: Efficient vector storage and semantic search with citation metadata

- RAG Generator: OpenRouter LLM integration for cited response generation

- Memory Layer: Zep temporal knowledge graphs for conversational context

- Podcast Generator: Script generation and Coqui TTS for multi-speaker podcast creation

Features

- Multi-Modal Document Processing: Process PDFs, text files, markdown, audio recordings, YouTube videos, and web pages seamlessly with PyMuPDF, AssemblyAI, and Firecrawl integration.

- Citation-First AI Responses: Every claim is backed by specific sources with page numbers, timestamps, and clickable references - ensuring verifiable and trustworthy answers.

- Temporal Knowledge Graphs: Zep-powered memory layer maintains conversational context across sessions using temporal knowledge graphs for intelligent context retention.