ComfyUI: Modular Image Generation Architecture

Moving beyond basic web UIs to 'Node-Based' generative pipelines. How ComfyUI enables granular control over every step of the diffusion process.

Project Overview

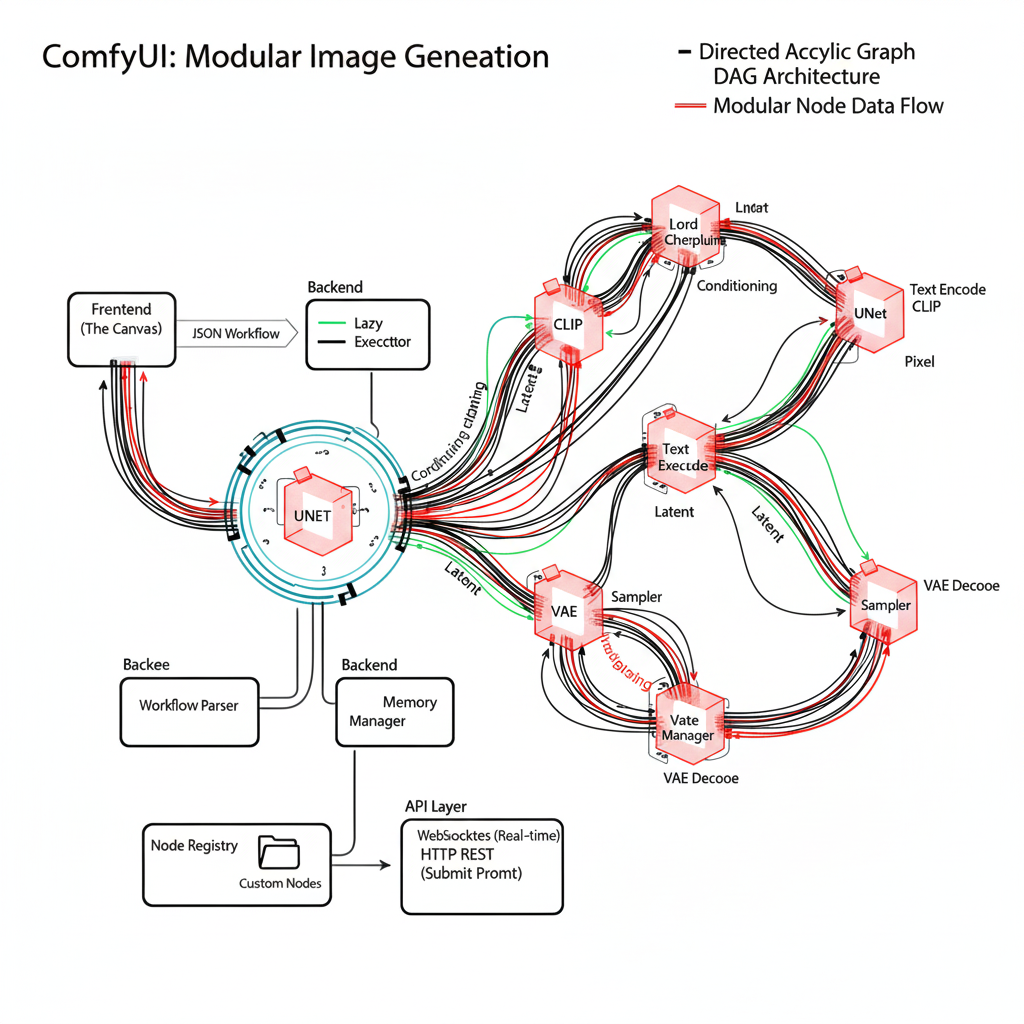

Standard interfaces like Automatic1111 hide much of the complexity of diffusion models behind fixed forms and tabs. ComfyUI exposes the internal wiring. By treating the checkpoint, CLIP text encoder, sampler, latents, and VAE as separate 'nodes', we can compose workflows such as 'Hires Fix', 'Inpainting', and 'ControlNet Stacking' step by step, and rearrange them in ways that form-based UIs make difficult. Because the whole pipeline is an explicit graph, it is a strong choice when reproducibility matters.

System Architecture

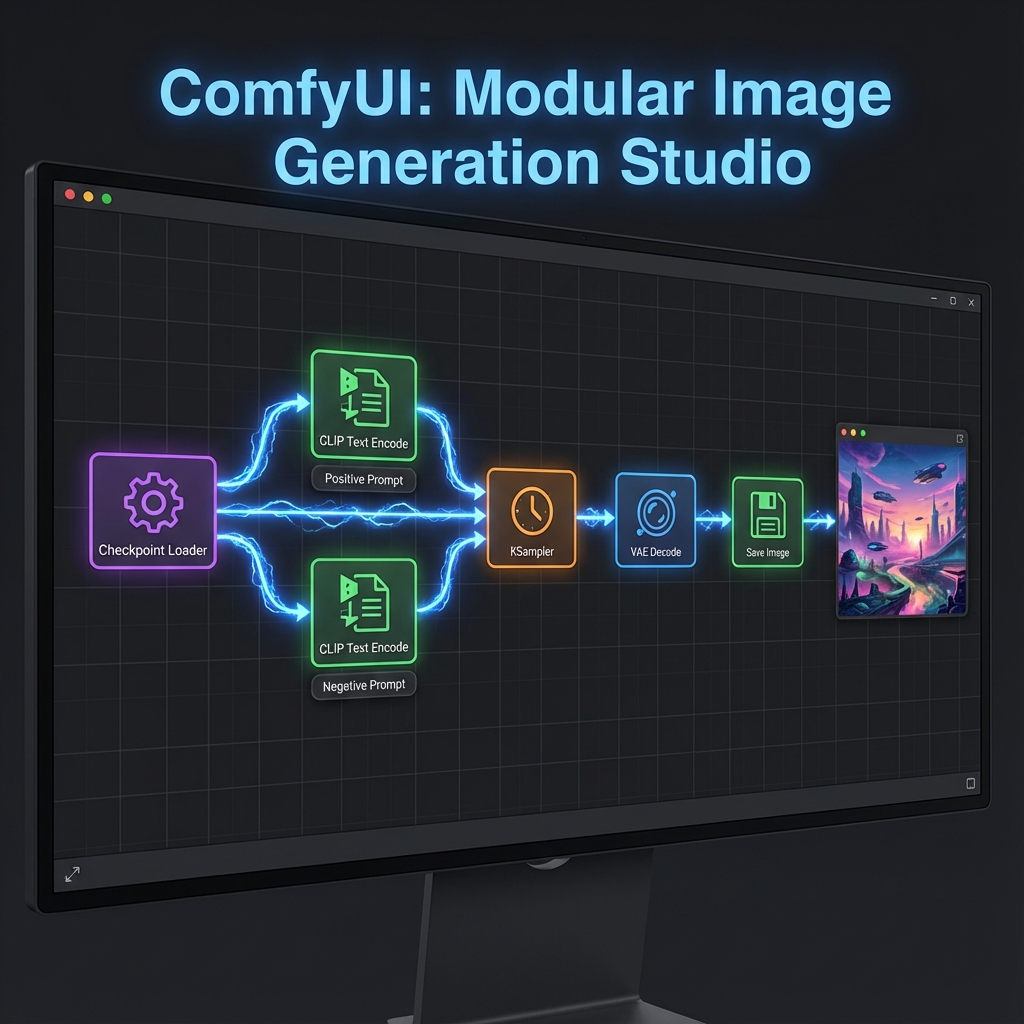

ComfyUI operates on a graph execution model. In a basic text-to-image workflow, data flows from left to right: Checkpoint Loader -> CLIP Text Encode -> KSampler -> VAE Decode -> Save Image. Because ComfyUI caches intermediate results (such as the loaded model) and re-executes only the nodes affected by a change, tweaking a prompt doesn't require reloading a multi-gigabyte checkpoint, which keeps iteration fast.
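To make the caching idea concrete, here is a minimal sketch of a graph executor that skips nodes whose inputs have not changed. It is a toy illustration, not ComfyUI's actual executor: the `CachingExecutor` class, the `("link", node_id)` convention, and the stand-in node functions are all hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

# A node is (function, inputs). An input is either a literal value or a
# ("link", node_id) reference to another node's output. (Hypothetical format.)
Node = Tuple[Callable[..., Any], Dict[str, Any]]


class CachingExecutor:
    """Toy executor that reuses a node's output when its inputs are unchanged."""

    def __init__(self) -> None:
        # node_id -> (signature, output) from the most recent execution
        self._cache: Dict[str, Tuple[Any, Any]] = {}
        # node ids that actually ran during the last call to run()
        self.executed: List[str] = []

    def run(self, graph: Dict[str, Node], target: str) -> Any:
        self.executed = []
        return self._evaluate(graph, target)[1]

    def _evaluate(self, graph: Dict[str, Node], node_id: str) -> Tuple[Any, Any]:
        func, inputs = graph[node_id]
        resolved = {}
        signature_parts = []
        for name in sorted(inputs):
            value = inputs[name]
            if isinstance(value, tuple) and value[0] == "link":
                # Resolve upstream nodes first; their signature feeds ours.
                upstream_sig, upstream_out = self._evaluate(graph, value[1])
                resolved[name] = upstream_out
                signature_parts.append((name, upstream_sig))
            else:
                resolved[name] = value
                signature_parts.append((name, repr(value)))
        signature = (func.__name__, tuple(signature_parts))
        cached = self._cache.get(node_id)
        if cached is not None and cached[0] == signature:
            return cached  # nothing upstream changed, so skip the work
        output = func(**resolved)
        self.executed.append(node_id)
        self._cache[node_id] = (signature, output)
        return signature, output


# Stand-ins for the expensive steps (no real model is loaded here).
def load_checkpoint(name: str) -> str:
    return f"model<{name}>"


def encode(model: str, text: str) -> str:
    return f"cond<{text}>"


def sample(model: str, cond: str, seed: int) -> str:
    return f"latent<{cond},{seed}>"


def build_graph(prompt: str) -> Dict[str, Node]:
    return {
        "loader": (load_checkpoint, {"name": "example.safetensors"}),
        "prompt": (encode, {"model": ("link", "loader"), "text": prompt}),
        "sampler": (sample, {"model": ("link", "loader"),
                             "cond": ("link", "prompt"), "seed": 12345}),
    }


executor = CachingExecutor()
executor.run(build_graph("a castle"), "sampler")
first_run = list(executor.executed)
executor.run(build_graph("a castle at dusk"), "sampler")
second_run = list(executor.executed)
```

On the first run all three nodes execute. After the prompt changes, only the text encoder and the sampler run again; the loader's cached output is reused, which mirrors why a prompt tweak does not trigger a checkpoint reload.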

Checkpoint Loader

Loads the checkpoint (typically a .safetensors file) and exposes its model, CLIP, and VAE as separate outputs.

KSampler

The core engine performing the denoising steps.

ControlNet Stack

Injecting structural guidance (pose, edges) into generation.

Latent Upscaler

Upscaling the latent before decoding, so a second sampling pass can add detail at the higher resolution.
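As an illustration of how these components wire together, the sketch below builds an API-format workflow in Python for a 'Hires Fix' style pass: a base sample, a latent upscale, and a second low-denoise sample. The class and input names (`CheckpointLoaderSimple`, `EmptyLatentImage`, `CLIPTextEncode`, `LatentUpscale`, `VAEDecode`, `SaveImage`) follow ComfyUI's built-in nodes as best understood here and should be checked against your installation. The checkpoint filename, the resolutions, the 0.5 denoise value, and the `dangling_links` helper are assumptions for the example. ControlNet is left out to keep it short.

```python
import json


def build_hires_workflow(checkpoint_name, positive_prompt, negative_prompt, seed=12345):
    """Return an API-format workflow dict: base pass, latent upscale, refine pass."""

    def sampler(latent_link, denoise):
        # Both passes share the model and conditioning; only the latent
        # input and the denoise strength differ.
        return {
            "class_type": "KSampler",
            "inputs": {
                "seed": seed, "steps": 20, "cfg": 8,
                "sampler_name": "euler", "scheduler": "normal",
                "denoise": denoise,
                "model": ["4", 0],
                "positive": ["6", 0],
                "negative": ["7", 0],
                "latent_image": latent_link,
            },
        }

    return {
        # Outputs of the loader: 0 = model, 1 = CLIP, 2 = VAE
        "4": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": checkpoint_name}},
        "5": {"class_type": "EmptyLatentImage",
              "inputs": {"width": 512, "height": 512, "batch_size": 1}},
        "6": {"class_type": "CLIPTextEncode",
              "inputs": {"text": positive_prompt, "clip": ["4", 1]}},
        "7": {"class_type": "CLIPTextEncode",
              "inputs": {"text": negative_prompt, "clip": ["4", 1]}},
        "3": sampler(["5", 0], denoise=1),
        "10": {"class_type": "LatentUpscale",
               "inputs": {"samples": ["3", 0], "upscale_method": "nearest-exact",
                          "width": 1024, "height": 1024, "crop": "disabled"}},
        # Second pass: partial denoise refines the upscaled latent.
        "11": sampler(["10", 0], denoise=0.5),
        "8": {"class_type": "VAEDecode",
              "inputs": {"samples": ["11", 0], "vae": ["4", 2]}},
        "9": {"class_type": "SaveImage",
              "inputs": {"filename_prefix": "hires_example", "images": ["8", 0]}},
    }


def dangling_links(workflow):
    """List (node_id, input_name, target_id) for links to nodes that do not exist."""
    missing = []
    for node_id, node in workflow.items():
        for name, value in node["inputs"].items():
            is_link = (isinstance(value, list) and len(value) == 2
                       and isinstance(value[0], str))
            if is_link and value[0] not in workflow:
                missing.append((node_id, name, value[0]))
    return missing


workflow = build_hires_workflow(
    "example_model.safetensors",  # hypothetical filename
    "a castle at dusk",
    "blurry, low quality",
)
workflow_json = json.dumps(workflow, indent=2)
```

Each `["node_id", output_index]` pair is a link to another node's output, so swapping the upscale method or dropping the second pass is a matter of editing one entry rather than rebuilding the pipeline.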

Implementation Details

Code Example

Workflow JSON snippet (API format):

    {
      "3": {
        "inputs": {
          "seed": 12345,
          "steps": 20,
          "cfg": 8,
          "sampler_name": "euler",
          "scheduler": "normal",
          "denoise": 1,
          "model": ["4", 0],
          "positive": ["6", 0],
          "negative": ["7", 0],
          "latent_image": ["5", 0]
        },
        "class_type": "KSampler"
      }
    }

Agent Memory

Use the 'Efficiency Nodes' pack to create XY plots directly in Comfy. You can iterate over CFG Scale vs Steps to find the sweet spot for a specific model without generating images one by one.
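If you prefer to script the same kind of sweep outside the UI, a rough alternative is to generate one workflow variant per CFG/steps combination from the KSampler node shown in the Code Example. This sketch does not use the Efficiency Nodes pack. The `sweep_cfg_steps` and `queue_prompt` helper names are hypothetical, and `queue_prompt` assumes a local ComfyUI server on its default address that accepts POST requests at `/prompt`; it is defined but not called here.

```python
import copy
import itertools
import json
import urllib.request

# The KSampler node from the Code Example, keyed by its node id.
BASE_WORKFLOW = {
    "3": {
        "inputs": {
            "seed": 12345, "steps": 20, "cfg": 8,
            "sampler_name": "euler", "scheduler": "normal", "denoise": 1,
            "model": ["4", 0], "positive": ["6", 0],
            "negative": ["7", 0], "latent_image": ["5", 0],
        },
        "class_type": "KSampler",
    }
}


def sweep_cfg_steps(workflow, sampler_id, cfg_values, step_values):
    """Return {(cfg, steps): workflow_copy} for every combination."""
    variants = {}
    for cfg, steps in itertools.product(cfg_values, step_values):
        variant = copy.deepcopy(workflow)  # leave the original untouched
        variant[sampler_id]["inputs"]["cfg"] = cfg
        variant[sampler_id]["inputs"]["steps"] = steps
        variants[(cfg, steps)] = variant
    return variants


def queue_prompt(workflow, server="http://127.0.0.1:8188"):
    """Submit one workflow to a running ComfyUI server (assumed address)."""
    payload = json.dumps({"prompt": workflow}).encode("utf-8")
    request = urllib.request.Request(
        f"{server}/prompt",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


variants = sweep_cfg_steps(BASE_WORKFLOW, "3", [4, 6, 8], [15, 20, 30])
```

Keeping the seed fixed across all variants means any visible difference between cells of the grid comes from CFG and step count alone. A real submission would need the full workflow (loader, prompts, latent, decode, save), not just the sampler node.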

Results & Impact

"ComfyUI saved our production pipeline. The ability to save a graph as a JSON file meant we could version control our image generation logic."

Speed

Optimized VRAM usage allows generation on lower-end GPUs.

Reproducibility

Saving the exact node settings and seed with the graph makes output repeatable on the same setup.

Modular

Easily swap out components (e.g., change VAE) without breaking flow.
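One way to make saved graphs friendlier to version control is to write them in a canonical form and record a fingerprint, so that two exports of the same workflow produce identical files and any changed setting shows up as a clean diff. The sketch below is one possible approach under that assumption; the `canonical_json` and `fingerprint` helpers are hypothetical and not part of ComfyUI.

```python
import hashlib
import json


def canonical_json(workflow):
    """Serialize with sorted keys and fixed indentation for stable diffs."""
    return json.dumps(workflow, sort_keys=True, indent=2, ensure_ascii=False) + "\n"


def fingerprint(workflow):
    """SHA-256 of the canonical form; changes whenever any setting changes."""
    return hashlib.sha256(canonical_json(workflow).encode("utf-8")).hexdigest()


# Same settings, different key order: should be treated as the same workflow.
workflow_a = {"3": {"class_type": "KSampler", "inputs": {"seed": 12345, "steps": 20}}}
workflow_b = {"3": {"inputs": {"steps": 20, "seed": 12345}, "class_type": "KSampler"}}
# A different seed: should be treated as a different workflow.
workflow_c = {"3": {"class_type": "KSampler", "inputs": {"seed": 54321, "steps": 20}}}

same = fingerprint(workflow_a) == fingerprint(workflow_b)
different = fingerprint(workflow_a) != fingerprint(workflow_c)
```

Committing the canonical file and storing the fingerprint alongside generated images gives a simple way to trace an output back to the graph that produced it.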

About the Author

Parmeet Singh Talwar

AI Context Engineer

Apex Neural

Parmeet engineers context-driven AI that combines LLMs with structured backend architecture and multi-platform integrations. He builds AI-powered systems with secure OAuth, fine-tunes open-source LLMs, and integrates image and video generation into production pipelines. Focused on clean design and system reliability.
