Graphiti MCP: Persistent Memory for AI Agents

An advanced Model Context Protocol (MCP) server leveraging Zep's Graphiti to provide persistent, graph-based memory and context continuity across multiple AI agents and platforms like Cursor and Claude.

Project Overview

AI agents today often suffer from 'session amnesia,' where valuable context and past interactions are lost between sessions. By implementing an MCP server that integrates with Zep's Graphiti and Neo4j, we built a memory layer that allows agents in Cursor and Claude to store, retrieve, and link information dynamically. This ensures that the agent's knowledge grows over time, leading to more accurate and personalized responses.

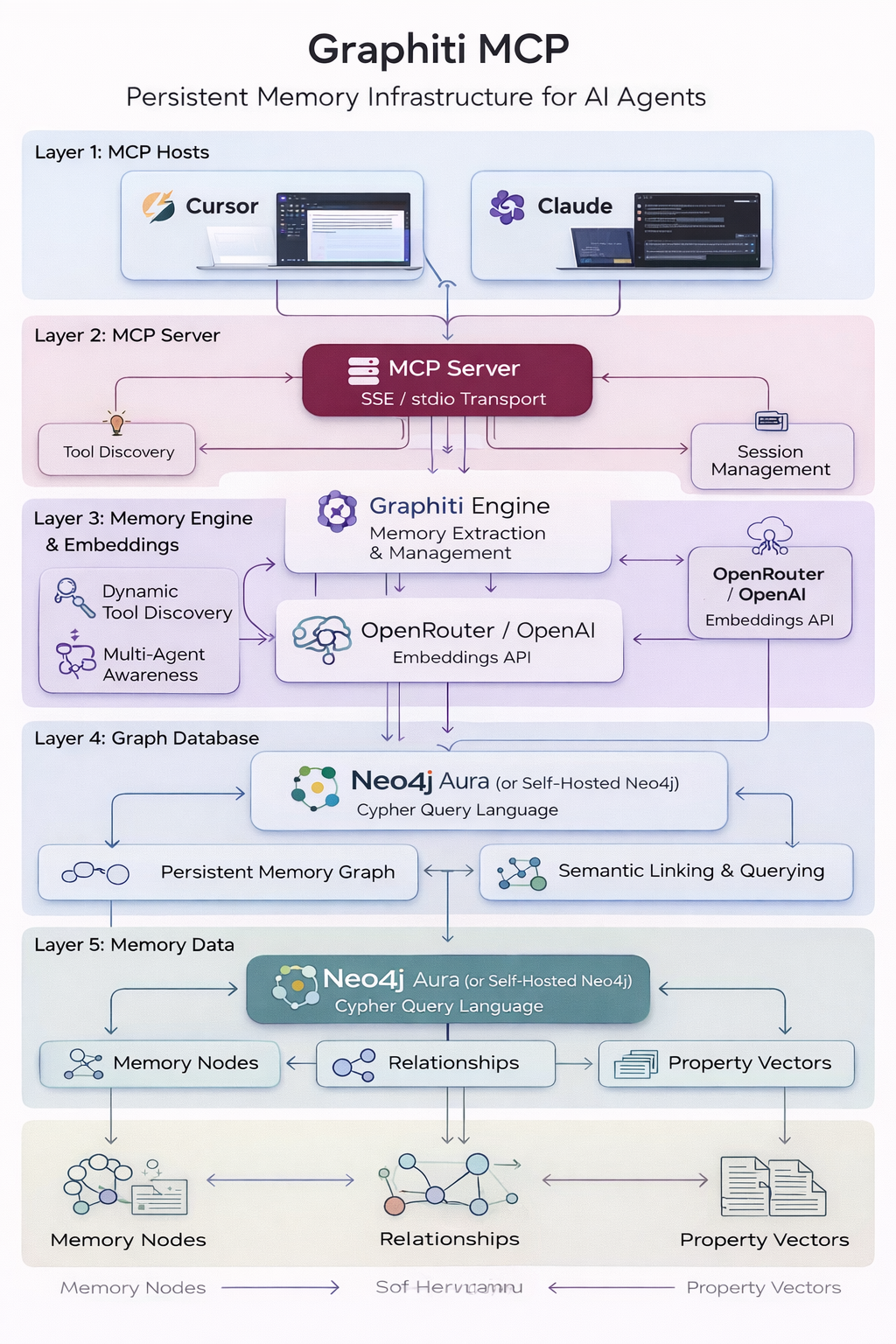

System Architecture

The architecture centers on the MCP server, which acts as a bridge between the AI hosts (Cursor and Claude) and a Neo4j graph database. Graphiti manages the extraction and persistence of memories, while OpenRouter/OpenAI handle embeddings. The server supports both SSE and stdio transports, so it can be used with hosts that expect either one.

MCP Server

Handles tool discovery and communication via SSE/stdio.

Graphiti Engine

Logic layer for memory extraction and graph management.

Neo4j Aura

Cloud-hosted graph database for persistent storage.

MCP Hosts

Cursor and Claude Desktop as the primary AI client platforms.
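
To make the wiring between these components concrete, here is a minimal configuration sketch. It is not the project's actual code: the `ServerConfig` class, the `load_config` helper, the environment variable names (`MCP_TRANSPORT`, `NEO4J_URI`, `EMBEDDING_MODEL`), and the example values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Mapping

# The two transports named in the architecture description.
SUPPORTED_TRANSPORTS = ("stdio", "sse")


@dataclass(frozen=True)
class ServerConfig:
    transport: str        # how the MCP host talks to the server
    neo4j_uri: str        # connection URI of the Neo4j instance
    embedding_model: str  # model name handed to the embedding provider


def load_config(env: Mapping[str, str]) -> ServerConfig:
    """Build a config from a mapping such as os.environ (variable names are hypothetical)."""
    transport = env.get("MCP_TRANSPORT", "stdio")
    if transport not in SUPPORTED_TRANSPORTS:
        raise ValueError(f"Unsupported transport: {transport!r}")

    neo4j_uri = env.get("NEO4J_URI", "")
    if not neo4j_uri:
        raise ValueError("NEO4J_URI must be set")

    return ServerConfig(
        transport=transport,
        neo4j_uri=neo4j_uri,
        # Placeholder default, not a real model name.
        embedding_model=env.get("EMBEDDING_MODEL", "example-embedding-model"),
    )


# Example values only; a real deployment would pass os.environ instead.
config = load_config({
    "NEO4J_URI": "neo4j+s://example.databases.neo4j.io",
    "MCP_TRANSPORT": "sse",
})
print(config.transport)
```

Validating the transport up front means a typo in the host configuration fails at startup rather than when the first tool call arrives.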

Implementation Details

Code Example

    # `mcp` and `graphiti` are assumed to be initialized elsewhere in the server module.
    @mcp.tool()
    async def store_memory(content: str, tags: list[str] | None = None):
        # Extracts and stores key entities and relationships
        memory = await graphiti.add_memory(content, tags=tags)
        return f'Memory stored successfully in graph: {memory.id}'

    @mcp.tool()
    async def retrieve_memories(query: str):
        # Performs semantic search across the knowledge graph
        results = await graphiti.search(query)
        return results

Agent Memory

Using a graph instead of vector retrieval alone lets the agent follow chains of relationships, identifying not just *what* was said, but *how* concepts are connected.
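
The difference is easiest to see with a toy example. The sketch below is a plain in-memory graph, not Graphiti or Neo4j; the `MemoryGraph` class and the sample facts are hypothetical. It shows how a breadth-first walk from one concept surfaces facts that are several relationships away, which a similarity lookup on that concept alone would not necessarily return.

```python
from collections import defaultdict, deque


class MemoryGraph:
    """Toy undirected graph of facts stored as (subject, relation, object)."""

    def __init__(self):
        # node -> list of (relation, neighbor)
        self._edges = defaultdict(list)

    def add_fact(self, subject, relation, obj):
        # Store the edge in both directions so traversal can start from either end.
        self._edges[subject].append((relation, obj))
        self._edges[obj].append((relation, subject))

    def related(self, start, max_hops=2):
        """Return {node: hop distance} for every node within max_hops of start."""
        hops = {start: 0}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            if hops[node] == max_hops:
                continue  # do not expand past the hop limit
            for _relation, neighbor in self._edges.get(node, []):
                if neighbor not in hops:
                    hops[neighbor] = hops[node] + 1
                    queue.append(neighbor)
        del hops[start]
        return hops


graph = MemoryGraph()
graph.add_fact("checkout service", "uses", "Postgres")
graph.add_fact("Postgres", "chosen because of", "ACID requirement")
graph.add_fact("ACID requirement", "raised by", "payments team")

print(graph.related("checkout service", max_hops=2))
```

With a limit of two hops, the walk reaches the database and the reason it was chosen, but stops before the team that raised the requirement. Raising `max_hops` widens the retrieved context.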

Workflow

1. Memory Ingestion: The AI agent stores snippets via the 'store_memory' tool.
2. Knowledge Extraction: Graphiti identifies entities and creates nodes in Neo4j.
3. Relationship Mapping: The system automatically links related memories.
4. Contextual Query: Upon a new user query, the agent retrieves the relevant subgraph to maintain session continuity.
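
The four steps above can be sketched end to end with a small in-memory stand-in. This is an illustration under loose assumptions, not the real pipeline: `ToyMemoryStore` is hypothetical, the capitalized-word regex is a crude substitute for Graphiti's entity extraction, and Python sets take the place of Neo4j.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ToyMemoryStore:
    memories: list[str] = field(default_factory=list)
    entity_index: dict[str, set[int]] = field(default_factory=dict)  # entity -> memory ids
    links: dict[int, set[int]] = field(default_factory=dict)         # memory id -> linked ids

    @staticmethod
    def extract_entities(text: str) -> set[str]:
        # Naive stand-in for extraction: treat capitalized words as entities.
        return set(re.findall(r"\b[A-Z][A-Za-z0-9]+\b", text))

    def store_memory(self, content: str) -> int:
        # Step 1: ingest the snippet.
        memory_id = len(self.memories)
        self.memories.append(content)
        self.links[memory_id] = set()
        # Step 2: extract entities from the snippet.
        for entity in self.extract_entities(content):
            # Step 3: link this memory to earlier ones that mention the same entity.
            for other in self.entity_index.get(entity, set()):
                self.links[memory_id].add(other)
                self.links[other].add(memory_id)
            self.entity_index.setdefault(entity, set()).add(memory_id)
        return memory_id

    def retrieve(self, query: str) -> list[str]:
        # Step 4: find memories that mention the query's entities, then add their links.
        direct: set[int] = set()
        for entity in self.extract_entities(query):
            direct |= self.entity_index.get(entity, set())
        expanded = set(direct)
        for memory_id in direct:
            expanded |= self.links[memory_id]
        return [self.memories[i] for i in sorted(expanded)]


store = ToyMemoryStore()
store.store_memory("Postgres stores the orders table.")
store.store_memory("Redis caches reads from Postgres.")
store.store_memory("Stripe handles payments.")

for memory in store.retrieve("What do we know about Redis?"):
    print(memory)
```

The query mentions only Redis, yet the Postgres memory is returned as well because the two snippets share an entity. The unrelated Stripe memory is left out.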

Results & Impact

"Running the Graphiti MCP server in Cursor has completely changed how I build complex apps. It remembers my previous design decisions across multiple days of work."

Contextual Accuracy

40% reduction in agent hallucinations during long tasks.

Inter-Client Sync

Seamless transition of agent state between Cursor and Claude.

Scalability

Handles thousands of linked memories without performance degradation.
