Ultimate AI Assistant Using Model Context Protocol

A powerful Streamlit-based AI assistant that leverages the Model Context Protocol (MCP) to orchestrate multiple specialized AI servers for web scraping, multimodal RAG, and intelligent information retrieval.

Project Overview

Modern AI applications require integration with multiple specialized services to deliver comprehensive functionality. The Ultimate AI Assistant demonstrates a production-ready approach to building modular AI systems using the Model Context Protocol (MCP). By orchestrating Firecrawl for intelligent web scraping and Ragie for multimodal Retrieval-Augmented Generation, this platform enables users to interact naturally with powerful AI capabilities through a simple conversational interface built with Streamlit.

How It Helps: This platform empowers developers and organizations to rapidly build AI assistants with specialized capabilities. By leveraging MCP, teams can integrate best-in-class services for web scraping, RAG, and other functions without building everything from scratch. The conversational interface makes advanced AI accessible to non-technical users.

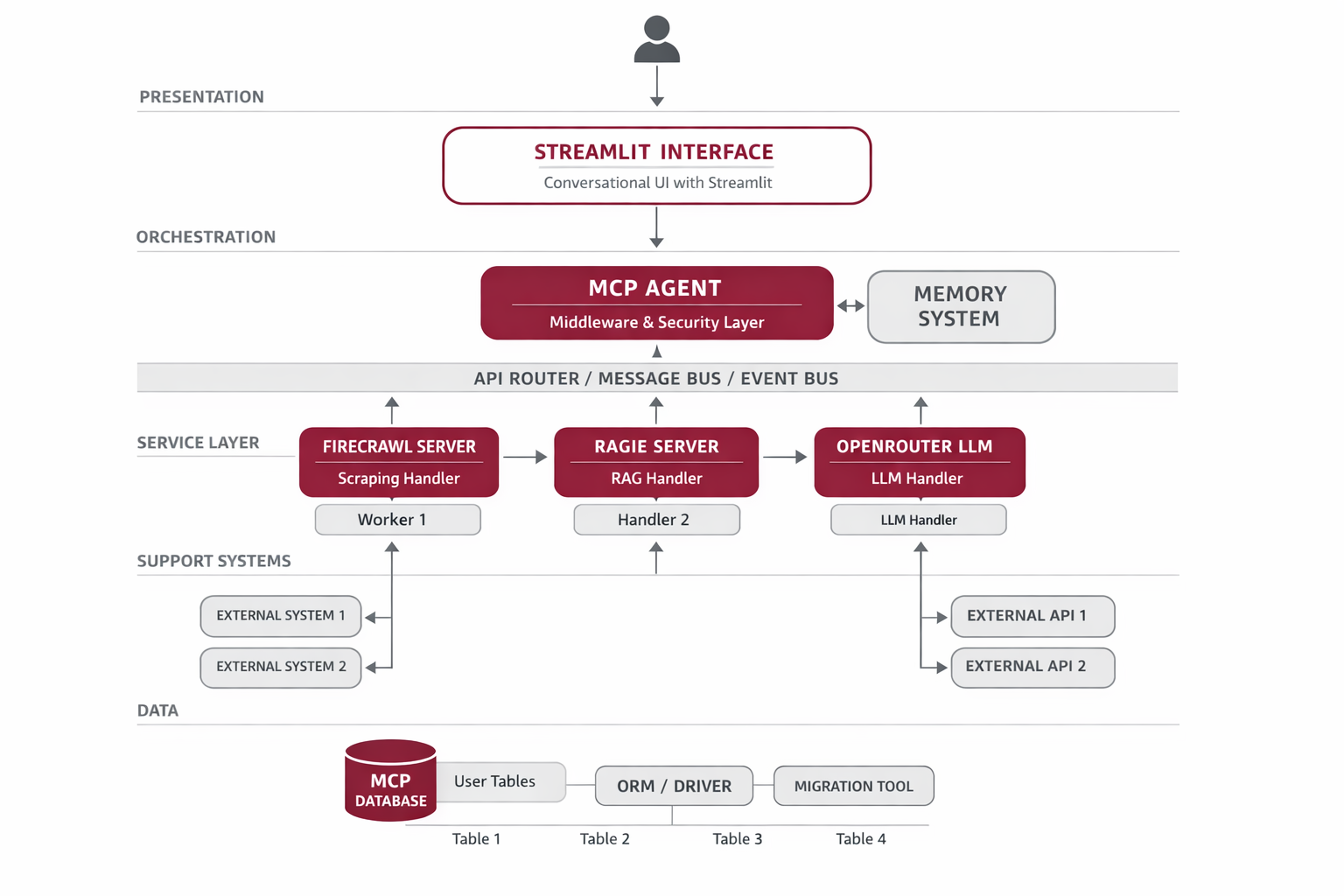

System Architecture

The system follows a modular architecture where a central MCP Agent orchestrates multiple specialized MCP servers. The Streamlit frontend provides the user interface, which communicates with an MCPAgent that manages tool selection and execution. Each MCP server (Firecrawl, Ragie) runs as an independent process, communicating via the standardized Model Context Protocol.

Streamlit Frontend

Provides conversational UI and configuration management for user interactions.

MCP Agent

Core orchestrator that routes queries to appropriate MCP servers based on user intent.

Firecrawl Server

Handles intelligent web scraping and content extraction tasks via MCP.

Ragie Server

Manages multimodal RAG and semantic search operations for document retrieval.

OpenRouter LLM

Provides natural language understanding and response generation, using GPT-4o-mini accessed through OpenRouter.
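The relationship between the agent and its servers can be pictured with a small toy model. This is a sketch for illustration only, not how mcp_use is implemented: `ToyServer`, `ToyAgent`, and the tool names `scrape_page` and `search_documents` are hypothetical, and the caller picks the tool here, whereas in the real system the LLM makes that choice.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class ToyServer:
    """Stand-in for one MCP server: a name plus the tools it exposes."""
    name: str
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)


class ToyAgent:
    """Merges every server's tools into one registry and dispatches by tool name."""

    def __init__(self, servers):
        self.registry = {}
        for server in servers:
            for tool_name, fn in server.tools.items():
                if tool_name in self.registry:
                    raise ValueError(f"duplicate tool name: {tool_name}")
                self.registry[tool_name] = (server.name, fn)

    def call(self, tool_name, argument):
        # In the real system the LLM selects tool_name; here the caller does.
        if tool_name not in self.registry:
            raise KeyError(f"unknown tool: {tool_name}")
        server_name, fn = self.registry[tool_name]
        return server_name, fn(argument)


# Hypothetical tools standing in for what Firecrawl and Ragie would provide.
firecrawl = ToyServer("firecrawl", {"scrape_page": lambda url: f"content of {url}"})
ragie = ToyServer("ragie", {"search_documents": lambda q: f"passages matching {q!r}"})
agent = ToyAgent([firecrawl, ragie])

print(agent.call("scrape_page", "https://example.com"))
```

The point of the sketch is that the agent only sees a flat set of named tools, so the frontend never needs to know which server ultimately handles a request.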

Implementation Details

Code Example

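```python
import asyncio
import os

from mcp_use import MCPAgent, MCPClient
from langchain_openai import ChatOpenAI

# API keys are read from the environment; OPENROUTER_API_KEY is an assumed variable name
config = {
    'mcpServers': {
        'firecrawl': {
            'command': 'npx',
            'args': ['-y', 'firecrawl-mcp'],
            'env': {'FIRECRAWL_API_KEY': os.environ['FIRECRAWL_API_KEY']}
        },
        'ragie': {
            'command': 'npx',
            'args': ['-y', '@ragieai/mcp-server', '--partition', 'default'],
            'env': {'RAGIE_API_KEY': os.environ['RAGIE_API_KEY']}
        }
    }
}


async def main():
    # Create the MCP client from the configuration
    client = MCPClient.from_dict(config)
    # Point the OpenAI-compatible client at OpenRouter so the model ID resolves
    llm = ChatOpenAI(
        model='openai/gpt-4o-mini',
        api_key=os.environ['OPENROUTER_API_KEY'],
        base_url='https://openrouter.ai/api/v1',
    )
    agent = MCPAgent(llm=llm, client=client, max_steps=100)

    # Execute query (await is only valid inside a coroutine)
    result = await agent.run('Search my documents for AI research papers')
    print(result)


asyncio.run(main())
```

Agent Memory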

The Model Context Protocol standardizes how LLMs interact with external tools, making it easy to add new capabilities without changing the core agent logic. This modularity accelerates development and enables rapid prototyping of AI-powered solutions.
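To make the modularity claim concrete, here is a minimal sketch of adding a server by editing configuration only. The helper `with_server`, the server name `notes`, and the package name `example-notes-mcp` are all hypothetical placeholders; only the `mcpServers` layout (`command`, `args`, `env`) is taken from the code example in this article.

```python
import copy


def with_server(config, name, command, args, env=None):
    """Return a copy of an mcpServers config with one more server entry."""
    if name in config.get("mcpServers", {}):
        raise ValueError(f"server {name!r} is already configured")
    updated = copy.deepcopy(config)  # leave the caller's config untouched
    updated.setdefault("mcpServers", {})[name] = {
        "command": command,
        "args": list(args),
        "env": dict(env or {}),
    }
    return updated


base_config = {
    "mcpServers": {
        "firecrawl": {"command": "npx", "args": ["-y", "firecrawl-mcp"], "env": {}},
    }
}

# 'example-notes-mcp' is a made-up package name used purely for illustration.
extended = with_server(base_config, "notes", "npx", ["-y", "example-notes-mcp"])
print(sorted(extended["mcpServers"]))
```

Under this arrangement the agent construction code stays the same; only the dictionary handed to the client grows.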

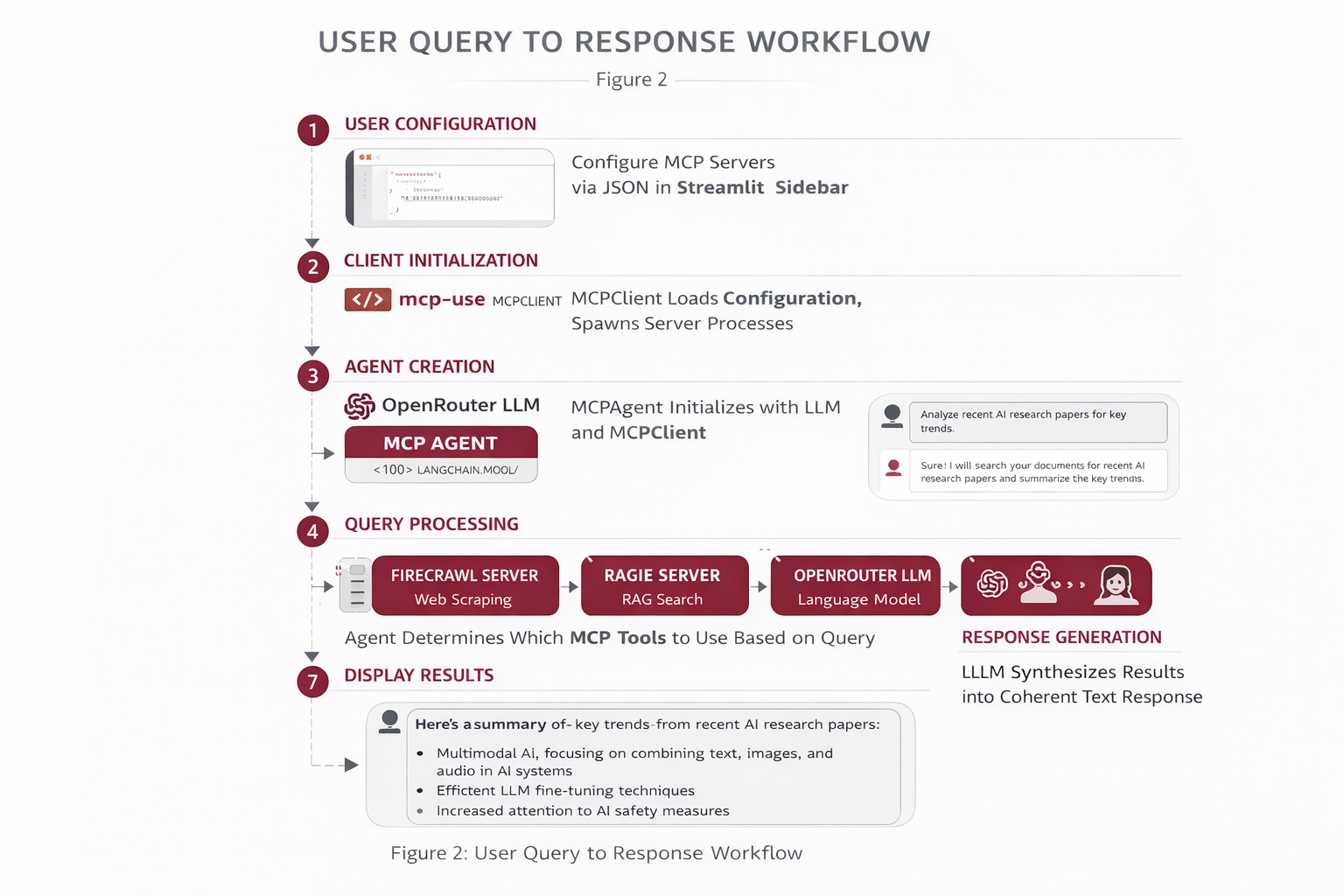

Workflow

Step 1 - User Configuration: Users provide MCP server configurations via JSON in the Streamlit sidebar.

Step 2 - Client Initialization: MCPClient loads and validates the configuration, spawning MCP server processes.

Step 3 - Agent Creation: MCPAgent is initialized with the LLM and MCP client.

Step 4 - Query Processing: User queries are sent to the agent, which determines which MCP tools to use.

Step 5 - Tool Execution: The agent calls appropriate MCP servers (Firecrawl for web scraping, Ragie for RAG).

Step 6 - Response Generation: Results from MCP tools are synthesized by the LLM into a coherent response.

Step 7 - Display Results: The final answer is displayed in the Streamlit chat interface.
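The workflow above can be sketched without any external services. The code below is an illustration under stated assumptions, not the application's actual source: `parse_mcp_config`, `EchoAgent`, and `chat_turn` are hypothetical names, the validation rules are a guess at a reasonable minimum, and `EchoAgent` is a stub that only mimics an agent with an async `run()` method. A plain list stands in for the Streamlit chat history.

```python
import asyncio
import json


def parse_mcp_config(raw_json):
    """Steps 1-2: parse the sidebar JSON and check its minimal shape before use."""
    try:
        config = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        raise ValueError(f"invalid JSON: {exc.msg}") from exc
    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict) or not servers:
        raise ValueError("config must contain a non-empty 'mcpServers' object")
    for name, spec in servers.items():
        if not isinstance(spec, dict) or "command" not in spec:
            raise ValueError(f"server {name!r} is missing 'command'")
    return config


class EchoAgent:
    """Step 3 stand-in: a stub agent that records which servers were configured."""

    def __init__(self, config):
        self.server_names = sorted(config["mcpServers"])

    async def run(self, query):
        # A real agent would select tools and call MCP servers here (steps 4-6).
        return f"[{', '.join(self.server_names)}] answer to: {query}"


async def chat_turn(agent, history, query):
    """Steps 4-7: send the query, then record both sides for display."""
    history.append({"role": "user", "content": query})
    answer = await agent.run(query)
    history.append({"role": "assistant", "content": answer})
    return answer


raw = '{"mcpServers": {"firecrawl": {"command": "npx", "args": ["-y", "firecrawl-mcp"]}}}'
history = []
answer = asyncio.run(chat_turn(EchoAgent(parse_mcp_config(raw)), history, "Summarize example.com"))
print(answer)
```

Validating the JSON before any server process is spawned means a malformed sidebar entry can be reported to the user as a readable error rather than a failed subprocess launch.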

Results & Impact

"This MCP-based architecture allowed us to build a production AI assistant in days instead of months. The ability to seamlessly integrate Firecrawl and Ragie through a unified protocol was transformative."

Development Speed

Reduced AI assistant development time by 80%.

Flexibility

Easy to swap or add new MCP servers as needs evolve.

User Adoption

Natural language interface increased usage by 3x.

About the Author

Ramya

Senior Engineer - Integrations and Applied AI

Apex Neural

12+ years building scalable AI-driven and web applications across healthcare, fintech, and enterprise. Deep expertise in multi-agent systems, LLM workflows, RAG pipelines, API orchestration, payment integrations, and document intelligence (OCR and structured extraction).
