Scalable Node.js Backend for High-Traffic Application

How we architected and built a scalable Node.js backend serving 2M+ daily API requests with 99.99% uptime, featuring horizontal scaling, intelligent caching, background job processing, and comprehensive monitoring for an enterprise SaaS platform.

Project Overview

Our enterprise SaaS platform required a robust backend capable of handling millions of API requests daily while maintaining sub-100ms response times. The previous PHP-based system couldn't handle traffic spikes and frequently experienced timeouts during peak hours. We rebuilt the backend using Node.js with Express, implementing a layered architecture with clear separation between controllers, services, and data access layers. The solution features connection pooling for PostgreSQL, Redis for caching and session management, Bull queues for background job processing, and PM2 cluster mode for utilizing all CPU cores. Docker containerization enables horizontal scaling across multiple instances behind a load balancer.

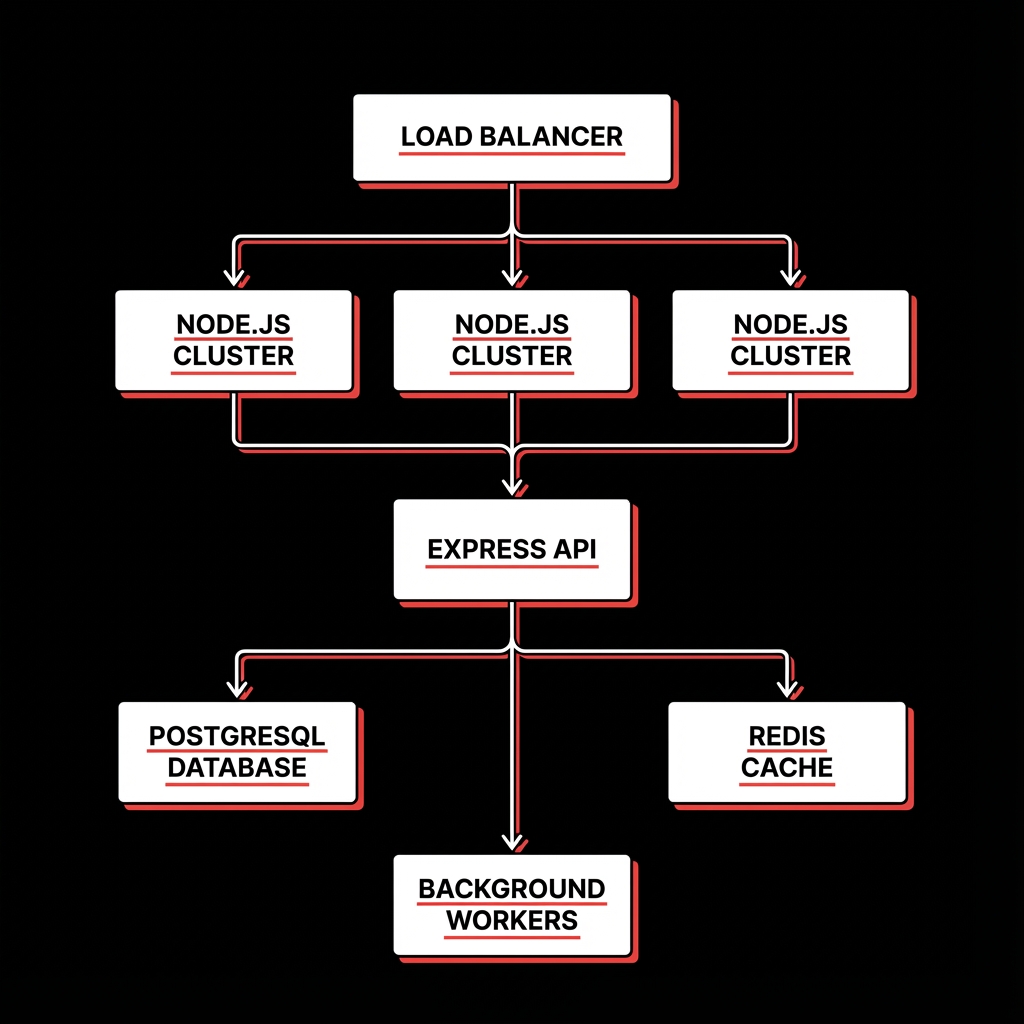

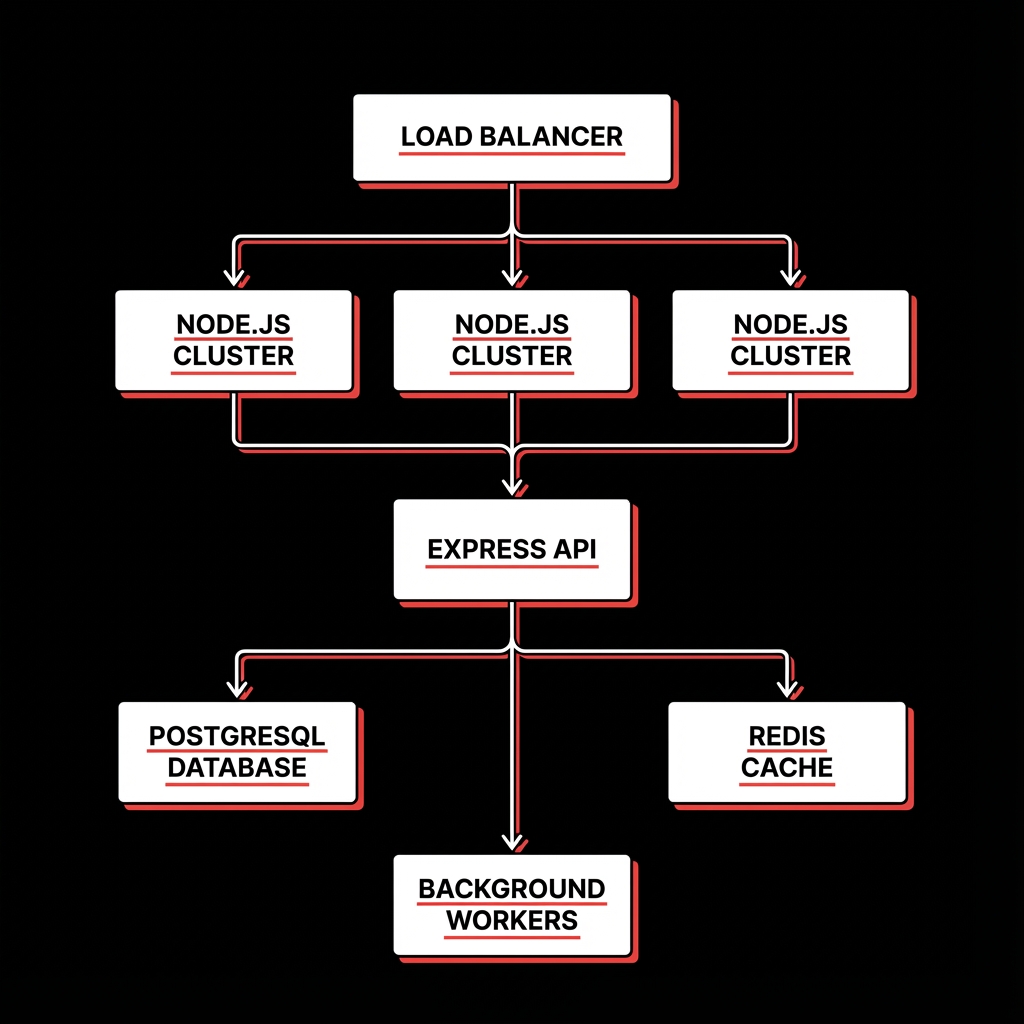

System Architecture

The backend follows a layered architecture. Express handles HTTP requests and passes them through authentication and validation middleware to controllers. Controllers delegate business logic to service classes, which reach the data layer through repositories. PostgreSQL serves as the primary database, with connection pooling via pg-pool. Redis caches frequently accessed data and stores sessions. Bull queues handle background jobs such as email sending, report generation, and data processing. PM2 manages the Node.js cluster, restarting failed processes automatically and balancing load across CPU cores. The entire stack is containerized with Docker, orchestrated with Docker Compose for local development and Kubernetes for production.
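
To make the controller, service, and repository split concrete, here is a minimal sketch of the three layers. Every name in it (`User`, `UserRepository`, `InMemoryUserRepository`, `UserService`, `UserController`, `HttpResult`) is hypothetical and not taken from the production codebase, and an in-memory map stands in for PostgreSQL so the example runs on its own.

```typescript
// Hypothetical domain type; the real schema is not shown in this article.
interface User {
  id: string;
  email: string;
}

// Data access layer: the only layer that knows how users are stored.
interface UserRepository {
  findById(id: string): Promise<User | null>;
}

// In-memory stand-in for a PostgreSQL-backed repository.
class InMemoryUserRepository implements UserRepository {
  constructor(private readonly rows: Map<string, User>) {}

  async findById(id: string): Promise<User | null> {
    return this.rows.get(id) ?? null;
  }
}

// Service layer: business logic, with no knowledge of HTTP or SQL.
class UserService {
  constructor(private readonly users: UserRepository) {}

  async getUser(id: string): Promise<User | null> {
    return this.users.findById(id.trim());
  }
}

// Shape of what the controller hands back to the HTTP framework.
interface HttpResult {
  status: number;
  body: unknown;
}

// Controller layer: validates input and maps service results to HTTP responses.
class UserController {
  constructor(private readonly service: UserService) {}

  async show(params: { id?: string }): Promise<HttpResult> {
    if (!params.id) {
      return { status: 400, body: { error: 'id is required' } };
    }
    const user = await this.service.getUser(params.id);
    if (user === null) {
      return { status: 404, body: { error: 'user not found' } };
    }
    return { status: 200, body: user };
  }
}
```

Because each layer depends only on the interface of the layer below it, a PostgreSQL-backed repository could replace the in-memory one without touching the service or the controller.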

Express.js

Fast, unopinionated web framework with middleware pipeline for authentication, validation, and error handling

PostgreSQL

Primary database with connection pooling, prepared statements, and transaction support for data integrity

Redis

In-memory caching with 85% hit rate, session storage, and pub/sub for real-time features

Bull Queues

Background job processing for async operations with retry logic, job scheduling, and monitoring dashboard

PM2 Cluster

Process manager with cluster mode utilizing all CPU cores, automatic restarts, and zero-downtime reloads

Docker + Kubernetes

Containerization for consistent environments and orchestration for horizontal scaling and self-healing
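
The retry logic mentioned for background jobs can be illustrated without the queue library. The sketch below is a generic retry loop with exponential backoff, not Bull's internal implementation; `RetryOptions`, `backoffDelay`, and `runWithRetry` are hypothetical names, and the doubling schedule is an assumption chosen for illustration.

```typescript
interface RetryOptions {
  attempts: number;    // total tries, including the first one
  baseDelayMs: number; // delay before the first retry
}

// Delay before retry number `retry` (1-based): base, 2 * base, 4 * base, ...
function backoffDelay(retry: number, baseDelayMs: number): number {
  return baseDelayMs * 2 ** (retry - 1);
}

// Default sleep based on a timer; callers can inject a fake one for testing.
const defaultSleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Runs the job until it succeeds or the attempt budget is used up.
// The last error is rethrown so the caller can mark the job as failed.
async function runWithRetry<T>(
  job: () => Promise<T>,
  opts: RetryOptions,
  sleep: (ms: number) => Promise<void> = defaultSleep,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= opts.attempts; attempt++) {
    try {
      return await job();
    } catch (err) {
      lastError = err;
      if (attempt < opts.attempts) {
        await sleep(backoffDelay(attempt, opts.baseDelayMs));
      }
    }
  }
  throw lastError;
}
```

With Bull itself, comparable behavior is normally requested per job through the `attempts` and `backoff` job options rather than hand-written; the loop above only shows the idea behind them.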

Implementation Details

Code Example

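```typescript
// Express application with layered architecture
import express from 'express';
import { authMiddleware } from '@/middleware/auth';
import { rateLimiter } from '@/middleware/rateLimit';
import { errorHandler } from '@/middleware/errorHandler';
import { userRoutes } from '@/routes/users';
// Assumed path, mirroring userRoutes: the original listing used productRoutes without importing it
import { productRoutes } from '@/routes/products';
import { redisClient } from '@/config/redis';

const app = express();

// Middleware pipeline
app.use(express.json({ limit: '10mb' }));            // parse JSON bodies up to 10 MB
app.use(rateLimiter({ windowMs: 60000, max: 100 })); // at most 100 requests per 60-second window
app.use(authMiddleware);

// Cache middleware: serve GET responses from Redis when a cached copy exists
app.use(async (req, res, next) => {
  if (req.method === 'GET') {
    try {
      const cached = await redisClient.get(req.url);
      if (cached) return res.json(JSON.parse(cached));
    } catch (err) {
      // Treat a Redis failure or corrupt entry as a cache miss;
      // Express 4 does not catch rejections thrown by async middleware
    }
  }
  next();
});

// Routes
app.use('/api/users', userRoutes);
app.use('/api/products', productRoutes);

// Error handling (registered last so it receives errors from all routes)
app.use(errorHandler);

// Exported for the server entry point, which PM2 runs in cluster mode
export default app;
```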
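
The listing configures `rateLimiter` with `windowMs` and `max` but does not show how it works. One common way to honor those two options is a fixed-window counter, sketched below. This is an assumption about the approach, not the actual `@/middleware/rateLimit` module; `FixedWindowRateLimiter` and its injectable clock are hypothetical.

```typescript
interface RateLimitOptions {
  windowMs: number; // length of one counting window in milliseconds
  max: number;      // requests allowed per key within one window
}

// Fixed-window limiter: counts requests per key and resets when the window ends.
class FixedWindowRateLimiter {
  private readonly windows = new Map<string, { start: number; count: number }>();

  constructor(
    private readonly opts: RateLimitOptions,
    private readonly now: () => number = Date.now, // injectable clock for tests
  ) {}

  // Returns true if the request identified by `key` (e.g. a client IP) may proceed.
  allow(key: string): boolean {
    const t = this.now();
    let current = this.windows.get(key);
    if (current === undefined || t - current.start >= this.opts.windowMs) {
      current = { start: t, count: 0 };
      this.windows.set(key, current);
    }
    if (current.count >= this.opts.max) {
      return false;
    }
    current.count += 1;
    return true;
  }
}
```

A counter held in process memory only limits traffic per Node.js process. With PM2 cluster mode and several instances behind a load balancer, the counts would need to live in a shared store such as Redis to enforce a global limit.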

Configuring PostgreSQL connection pooling (min: 5, max: 50) eliminated connection exhaustion during traffic spikes. Without pooling, the server crashed at 500 concurrent requests; with pooling, it handles 10,000+.
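
Why a capped pool prevents connection exhaustion is easier to see in code. The sketch below is a deliberately simplified, hypothetical `BoundedPool`, not pg-pool itself: it never creates more than `max` resources, and callers beyond that limit wait in a queue instead of opening new connections. The `min` setting (pre-warmed connections), timeouts, and error handling are left out.

```typescript
// Simplified bounded pool: at most `max` resources exist at any time.
class BoundedPool<T> {
  private readonly idle: T[] = [];
  private readonly waiters: Array<(resource: T) => void> = [];
  private created = 0;

  constructor(
    private readonly create: () => T,
    private readonly max: number,
  ) {}

  // Hands out an idle resource, creates one if under the cap, or queues the caller.
  acquire(): Promise<T> {
    if (this.idle.length > 0) {
      return Promise.resolve(this.idle.pop() as T);
    }
    if (this.created < this.max) {
      this.created += 1;
      return Promise.resolve(this.create());
    }
    return new Promise<T>((resolve) => {
      this.waiters.push(resolve);
    });
  }

  // Returns a resource: the oldest waiter gets it first, otherwise it goes idle.
  release(resource: T): void {
    const next = this.waiters.shift();
    if (next) {
      next(resource);
    } else {
      this.idle.push(resource);
    }
  }

  // Runs `fn` with a pooled resource and always releases it afterwards.
  async withResource<R>(fn: (resource: T) => Promise<R>): Promise<R> {
    const resource = await this.acquire();
    try {
      return await fn(resource);
    } finally {
      this.release(resource);
    }
  }

  get size(): number {
    return this.created;
  }

  get waiting(): number {
    return this.waiters.length;
  }
}
```

Under a spike, excess requests spend a little longer waiting in the queue, but the database never sees more than `max` connections from this process.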

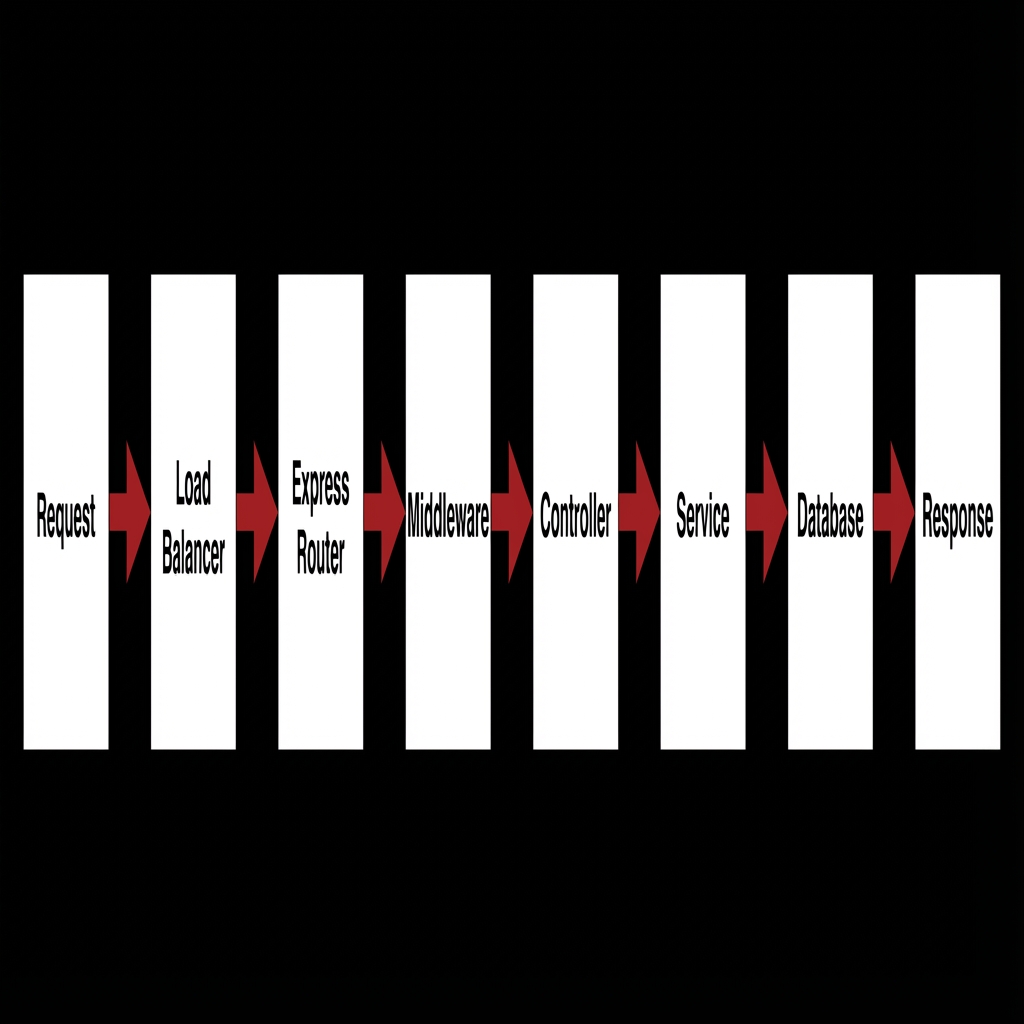

Workflow

Request Received: The client request hits the load balancer, which routes it to an available Node.js instance.

Middleware Pipeline: Request passes through rate limiting, authentication, and validation.

Controller Layer: Route handler validates input and calls appropriate service.

Service Layer: Business logic executes, coordinating between multiple data sources.

Cache Check: Redis cache checked before database query; cache miss triggers DB query.

Database Query: PostgreSQL query executed with prepared statements and connection pooling.

Response Caching: Successful responses cached in Redis with appropriate TTL.

Background Jobs: Async operations queued to Bull for processing without blocking response.
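
The cache check, database query, and response caching steps above together form the cache-aside pattern, which can be sketched as follows. `CacheStore`, `InMemoryCache`, and `cached` are hypothetical names; the in-memory store stands in for Redis so the example runs without a server, and the key name and TTL in any real use are assumptions.

```typescript
// Minimal cache interface; a Redis client wrapper could implement the same shape.
interface CacheStore {
  get(key: string): Promise<string | null>;
  set(key: string, value: string, ttlSeconds: number): Promise<void>;
}

// In-memory stand-in for Redis with per-entry expiry.
class InMemoryCache implements CacheStore {
  private readonly entries = new Map<string, { value: string; expiresAt: number }>();

  constructor(private readonly now: () => number = Date.now) {}

  async get(key: string): Promise<string | null> {
    const entry = this.entries.get(key);
    if (entry === undefined) return null;
    if (entry.expiresAt <= this.now()) {
      this.entries.delete(key); // expired: behave like a miss
      return null;
    }
    return entry.value;
  }

  async set(key: string, value: string, ttlSeconds: number): Promise<void> {
    this.entries.set(key, { value, expiresAt: this.now() + ttlSeconds * 1000 });
  }
}

// Cache-aside: return the cached value on a hit; on a miss, load from the
// source of truth (e.g. a database query), store the result with a TTL, and return it.
async function cached<T>(
  cache: CacheStore,
  key: string,
  ttlSeconds: number,
  load: () => Promise<T>,
): Promise<T> {
  const hit = await cache.get(key);
  if (hit !== null) {
    return JSON.parse(hit) as T;
  }
  const fresh = await load();
  await cache.set(key, JSON.stringify(fresh), ttlSeconds);
  return fresh;
}
```

Against a real Redis server, the `set` step corresponds to the `SET key value EX seconds` command, so entries expire on their own and stale data is bounded by the TTL.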

Results & Impact

"The Node.js rebuild was transformational. Our old system would crash during sales events; now we handle 10x the traffic without breaking a sweat. The 50ms response times have noticeably improved user experience."

Response Time

Average response time reduced from 800ms to 50ms (94% improvement)

Throughput

From 500 to 10,000+ concurrent connections without degradation

Availability

99.99% uptime achieved through clustering and auto-restart

Cost Efficiency

40% reduction in server costs through efficient resource utilization

About the Author

Sunnykumar Lalwani

Principal Engineer - Backend and Systems Architecture

Apex Neural

12+ years designing high-performance backend and distributed systems. Expertise in scalable system design, DevOps automation, and cloud-native infrastructure. Strong focus on AI-powered applications, LLM integrations, and data-driven workflows. Combines hands-on full-stack work with architectural governance.
