Unify — Omnichannel AI Sales & Support Assistant

How we built Kala — an AI-powered sales assistant that speaks Hinglish, analyses customer photos, and closes saree sales 24/7 across WhatsApp, Instagram, and Telegram.

Project Overview

A leading D2C saree retailer was losing sales every day because customer queries on WhatsApp and Instagram went unanswered after business hours. Agents were overwhelmed managing hundreds of chat threads manually. We built **Unify** — a multi-tenant SaaS platform with an AI persona called **Kala** — that acts like a knowledgeable in-store salesperson: she understands Telugu, Hinglish, and English; she can look at a photo a customer sends and find the matching saree in a 5,000-product catalog; and she hands off to a human agent the moment sentiment dips or a refund is requested.

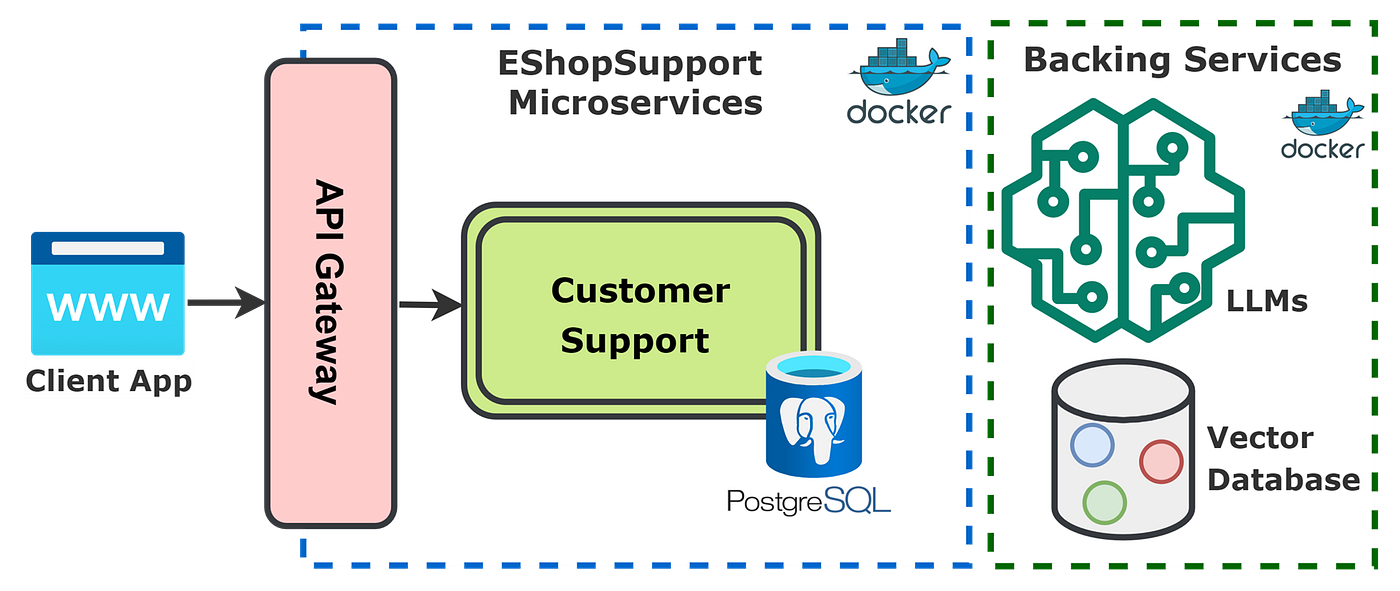

System Architecture

Unify is built around an **Omnichannel Gateway** that normalises payloads from WhatsApp, Instagram, and Telegram into a single internal Message schema. Every inbound message is routed through a JWT-authenticated multi-tenant context resolver that scopes all data and AI config to the correct organisation. The AI pipeline runs hybrid RAG retrieval (BM25 lexical search plus sentence-transformer semantic search, fused with Reciprocal Rank Fusion) and then sends the retrieved context to Gemini 2.0 Flash for generation. A real-time WebSocket layer pushes escalations and new messages to the React dashboard instantly.
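The context-resolver step can be sketched with the standard library alone. This is a minimal illustration that assumes HS256-signed tokens carrying a hypothetical `org_id` claim alongside the standard `sub` claim. `OrgContext`, `issue_token` and `resolve_org_context` are illustrative names, not Unify's code. A production service would more likely rely on a maintained JWT library such as PyJWT.

```python
import base64
import hashlib
import hmac
import json
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgContext:
    org_id: str   # hypothetical claim naming the tenant
    user_id: str  # standard JWT "sub" claim


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url; restore padding before decoding
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def issue_token(claims: dict, secret: bytes) -> str:
    """Create an HS256 token (used here only to exercise the resolver)."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    signature = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(signature)}"


def resolve_org_context(token: str, secret: bytes) -> OrgContext:
    """Verify an HS256 JWT and return the tenant scope it carries."""
    parts = token.split(".")
    if len(parts) != 3:
        raise PermissionError("malformed token")
    header_b64, payload_b64, sig_b64 = parts
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise PermissionError("unexpected algorithm")  # any other alg is rejected
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
        raise PermissionError("bad signature")
    claims = json.loads(_b64url_decode(payload_b64))
    if claims.get("exp", float("inf")) < time.time():
        raise PermissionError("token expired")
    return OrgContext(org_id=claims["org_id"], user_id=claims["sub"])
```

Every downstream query would then take `ctx.org_id` from this object instead of trusting anything in the request body.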

Omnichannel Gateway

Unified webhook endpoints that normalise WhatsApp, Instagram, and Telegram payloads into a standard internal Message schema, with signature verification and multi-tenant context injection.
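As a rough sketch of what normalisation and signature verification involve, the code below maps the first text message of each channel's webhook into one dataclass. It assumes simplified payload shapes (WhatsApp Cloud API `entry[].changes[].value.messages[]`, Instagram `entry[].messaging[]`, Telegram `Update.message`) and Meta's `X-Hub-Signature-256` header. The `Message` fields and the `normalise` function are hypothetical, and media, reactions and status callbacks are ignored.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass
class Message:
    """Hypothetical internal schema shared by every channel."""
    org_id: str
    channel: str            # "whatsapp" | "instagram" | "telegram"
    sender_id: str
    text: Optional[str]
    timestamp: int          # Unix seconds


def verify_meta_signature(raw_body: bytes, header_value: str, app_secret: bytes) -> bool:
    # Meta sends X-Hub-Signature-256: "sha256=<hex HMAC-SHA256 of the raw body>"
    expected = "sha256=" + hmac.new(app_secret, raw_body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, header_value or "")


def normalise(channel: str, payload: dict, org_id: str) -> Message:
    if channel == "whatsapp":
        msg = payload["entry"][0]["changes"][0]["value"]["messages"][0]
        return Message(org_id, channel, msg["from"],
                       msg.get("text", {}).get("body"), int(msg["timestamp"]))
    if channel == "instagram":
        event = payload["entry"][0]["messaging"][0]
        # Instagram messaging timestamps are in milliseconds
        return Message(org_id, channel, event["sender"]["id"],
                       event.get("message", {}).get("text"), event["timestamp"] // 1000)
    if channel == "telegram":
        msg = payload["message"]
        return Message(org_id, channel, str(msg["chat"]["id"]), msg.get("text"), msg["date"])
    raise ValueError(f"unsupported channel: {channel}")
```

Telegram does not sign payloads this way. Its webhooks can instead be checked against the `X-Telegram-Bot-Api-Secret-Token` header configured through `setWebhook`.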

Hybrid RAG Engine

BM25 (rank-bm25) lexical retrieval fused with Sentence-Transformer semantic search via Reciprocal Rank Fusion (RRF). NLU entities (color, fabric, SKU, price range) are injected into the search query. A greedy diversity filter ensures results span different colors and fabrics.
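The fusion and diversity steps are small enough to show in full. This sketch assumes products are dicts with `color` and `fabric` keys and that each retriever returns product indices best-first. It uses the conventional RRF constant of 60, since the value Unify uses for `rrf_k` is not stated. `reciprocal_rank_fusion` and `greedy_diversity` are hypothetical names.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[int]], k: int, rrf_k: int = 60) -> list[int]:
    """Fuse ranked lists of product indices: score(d) = sum of 1 / (rrf_k + rank_d)."""
    scores: dict[int, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1.0 / (rrf_k + rank)
    # Highest fused score first; ties broken by index so results are deterministic
    return sorted(scores, key=lambda d: (-scores[d], d))[:k]


def greedy_diversity(candidates: list[int], products: list[dict], k: int) -> list[int]:
    """Prefer unseen (color, fabric) combinations, then backfill in fused order."""
    seen: set = set()
    picked: list[int] = []
    deferred: list[int] = []
    for i in candidates:
        key = (products[i].get("color"), products[i].get("fabric"))
        if key in seen:
            deferred.append(i)
        else:
            seen.add(key)
            picked.append(i)
    return (picked + deferred)[:k]
```

Because RRF uses only ranks, BM25 scores and cosine similarities never need to be put on a common scale. This is the usual reason to prefer it over weighted score averaging.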

LLM Orchestrator

Gemini 2.0 Flash via OpenRouter. Manages system prompt versioning, conversation history compression, language mirroring (Hinglish ↔ Telugu ↔ English), and brand guardrails (2–4 sentence replies, CTA in every message).
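History compression and the brand guardrails might look roughly like the sketch below, which makes several assumptions. The message format is the OpenAI-style chat schema that OpenRouter accepts. `MAX_RECENT`, the recap wording and the call-to-action vocabulary in `CTA_PATTERN` are hypothetical choices. Real compression would more likely summarise older turns with the LLM itself.

```python
import re

MAX_RECENT = 6  # hypothetical: number of most recent messages kept verbatim
# Hypothetical call-to-action vocabulary used to check "CTA in every message"
CTA_PATTERN = re.compile(r"\b(shop|order|buy|tap|reply|visit|book)\b", re.IGNORECASE)


def build_messages(system_prompt: str, history: list[dict], user_text: str, context: str) -> list[dict]:
    """Assemble an OpenAI-style chat payload, the request format OpenRouter accepts."""
    older, recent = history[:-MAX_RECENT], history[-MAX_RECENT:]
    messages = [{"role": "system", "content": system_prompt}]
    if older:
        # Naive compression: fold older customer turns into one short recap line
        recap = " | ".join(m["content"][:60] for m in older if m["role"] == "user")
        messages.append({"role": "system", "content": f"Earlier, the customer said: {recap}"})
    messages.extend(recent)
    messages.append({"role": "user", "content": f"{context}\n\nCustomer: {user_text}"})
    return messages


def passes_guardrails(reply: str) -> bool:
    """Brand guardrails: 2 to 4 sentences and at least one call to action."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", reply.strip()) if s]
    return 2 <= len(sentences) <= 4 and CTA_PATTERN.search(reply) is not None
```

A reply that fails `passes_guardrails` could be regenerated or trimmed before it is sent.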

Vision Service

Multimodal image analysis: customer photos are base64-encoded and sent to the LLM with a specialised prompt that extracts colour, fabric, and pattern tags, which are then used to query the RAG catalog.
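Here is a minimal sketch of the request and the tag parsing. It assumes an OpenAI-compatible multimodal message (a `text` part plus an `image_url` part carrying a base64 data URL) and a hypothetical prompt that asks for JSON tags. `build_vision_message` and `tags_to_query` are illustrative names, and the actual network call to the model is omitted.

```python
import base64
import json

# Hypothetical prompt; the real "specialised prompt" is not published
VISION_PROMPT = (
    "Describe the saree in this photo. Reply with JSON only: "
    '{"color": ..., "fabric": ..., "pattern": ...}'
)


def build_vision_message(image_bytes: bytes, mime: str = "image/jpeg") -> dict:
    """OpenAI-compatible multimodal user message with the photo inlined as a data URL."""
    b64 = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": VISION_PROMPT},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{b64}"}},
        ],
    }


def tags_to_query(model_reply: str) -> str:
    """Turn the model's JSON tags into a search query for the RAG catalog."""
    start, end = model_reply.find("{"), model_reply.rfind("}")
    if start == -1 or end == -1:
        return ""
    try:
        tags = json.loads(model_reply[start : end + 1])
    except json.JSONDecodeError:
        return ""
    return " ".join(str(tags[key]) for key in ("color", "fabric", "pattern") if tags.get(key))
```

Slicing from the first `{` to the last `}` tolerates models that wrap their JSON in a Markdown fence or add a sentence around it.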

Escalation Engine

Rule-based scoring that triggers a human handoff on keywords ("refund", "talk to human"), negative-sentiment threshold breaches, or consecutive failed AI turns. It sets the human_controlled flag and pushes a WebSocket event to the Agent Inbox.
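The scoring rule could be expressed as follows. Only the three trigger types and the `human_controlled` flag come from the description above. The keyword list, the weights and the threshold are hypothetical. Sentiment is assumed to come from some classifier as a value in [-1, 1], and `notify` stands in for the WebSocket push.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical trigger phrases, weights and threshold; tune against real conversations
ESCALATION_KEYWORDS = ("refund", "talk to human", "human agent")
NEGATIVE_SENTIMENT = -0.5
THRESHOLD = 1.0


@dataclass
class Thread:
    human_controlled: bool = False
    consecutive_ai_failures: int = 0


def escalation_score(text: str, sentiment: float, failures: int) -> float:
    score = 0.0
    if any(kw in text.lower() for kw in ESCALATION_KEYWORDS):
        score += THRESHOLD        # an explicit request escalates on its own
    if sentiment < NEGATIVE_SENTIMENT:
        score += 0.6
    score += 0.4 * failures       # three failed turns in a row cross the threshold alone
    return score


def maybe_escalate(thread: Thread, text: str, sentiment: float,
                   notify: Callable[[dict], None]) -> bool:
    if thread.human_controlled:
        return False              # already with an agent
    if escalation_score(text, sentiment, thread.consecutive_ai_failures) < THRESHOLD:
        return False
    thread.human_controlled = True  # AI replies pause until an agent hands back
    notify({"type": "escalation", "message": text})
    return True
```

With these weights, negative sentiment alone does not escalate, but negative sentiment combined with two failed AI turns does.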

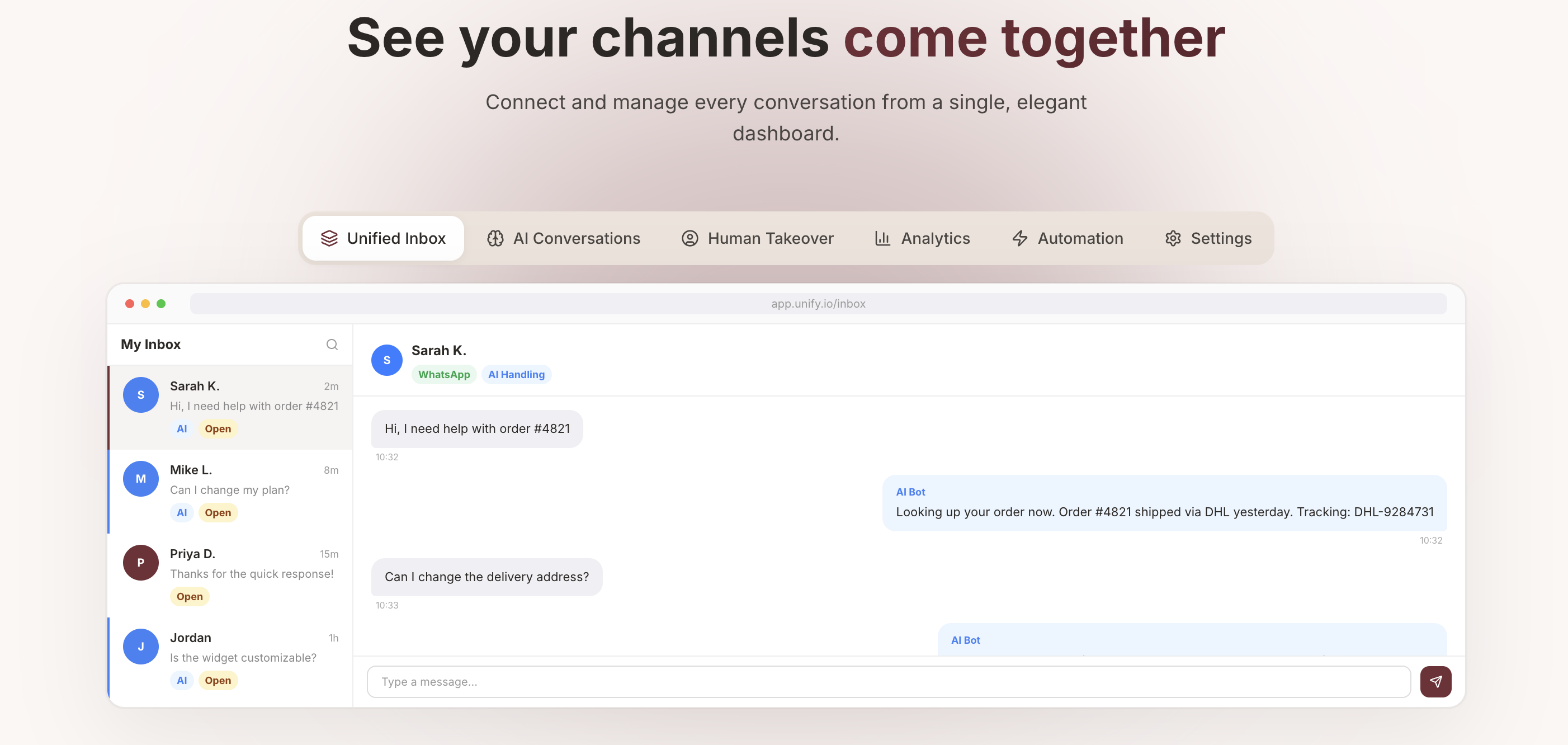

Unified Agent Inbox

React + Vite dashboard with a 3-column inbox (list | thread | context panel). Real-time red badge alerts arrive via WebSocket. Agents can take over a thread, reply via the WhatsApp API, attach products, and resolve the conversation or hand it back to the AI.
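On the server side, real-time alerts need a per-organisation fan-out. This framework-agnostic sketch assumes each connected dashboard is represented by an `asyncio.Queue` that a per-socket task drains and forwards as text frames. `InboxHub` and its methods are hypothetical names. A multi-process deployment would need a shared broker rather than in-memory state.

```python
import asyncio
import json
from collections import defaultdict


class InboxHub:
    """In-memory fan-out of inbox events to every dashboard connected for an org.

    Each connection is an asyncio.Queue; a real server would run one task per
    WebSocket that awaits queue.get() and sends the JSON string as a text frame.
    """

    def __init__(self) -> None:
        self._subscribers: dict[str, set] = defaultdict(set)

    def subscribe(self, org_id: str) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue()
        self._subscribers[org_id].add(queue)
        return queue

    def unsubscribe(self, org_id: str, queue: asyncio.Queue) -> None:
        self._subscribers[org_id].discard(queue)

    def publish(self, org_id: str, event: dict) -> None:
        # Events only reach dashboards of the same org (tenant isolation)
        frame = json.dumps(event)
        for queue in self._subscribers[org_id]:
            queue.put_nowait(frame)
```

An escalation would then be published as something like `hub.publish(org_id, {"type": "escalation", "thread_id": ...})`, and the dashboard would turn it into the red badge.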

Implementation Details

Code Example

```python
from typing import Optional


class RAGService:
    """Hybrid retrieval over products: BM25 + semantic + RRF + diversity filter."""

    # Excerpt: _build_index, the search methods and the module-level helpers
    # (_expand_query_with_entities, _expand_query_synonyms,
    # _format_product_for_context) are defined elsewhere.

    def retrieve(
        self,
        query: str,
        query_type: str = "descriptive",
        entities: Optional[dict] = None,
        top_k: Optional[int] = None,
    ) -> str:
        # (Re)build the BM25 and embedding indexes when needed
        self._build_index()
        k = top_k or self.top_k

        # Expand query with NLU entities (color, fabric, sku) and synonyms
        search_query = _expand_query_with_entities(query, entities)
        search_query = _expand_query_synonyms(search_query) or search_query

        # Boost lexical for exact SKU; boost semantic for descriptive queries
        lexical_k = k * 2 if query_type == "exact" else k
        semantic_k = k * 2 if query_type == "descriptive" else k
        lex_idx = self._lexical_search(search_query, lexical_k)
        sem_idx = self._semantic_search(search_query, semantic_k)

        # Reciprocal Rank Fusion: score(d) = Σ 1/(rrf_k + rank_d)
        merged = self._merge_and_dedup(lex_idx, sem_idx, k)

        # Greedy diversity: prefer products with unseen color/fabric combination
        if self.diversity_enabled:
            merged = self._apply_diversity(merged, k)

        lines = [
            _format_product_for_context(self._products[i], self._site_base)
            for i in merged
            if i < len(self._products)
        ]
        return "CONTEXT FROM PRODUCT CATALOG:\n" + "\n".join(lines)
```

Agent Memory

Before passing the user's message to BM25/embeddings we extract NLU entities (color, fabric, price range) and append them to the search query. This means "show me something in green silk under ₹5,000" resolves to {color: 'green', fabric: 'silk', max_price: 5000} and retrieves from a much tighter candidate set — dramatically reducing hallucinated product mentions.
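A rule-based version of that extraction step is sketched below. It assumes small hand-written colour and fabric vocabularies and an "under / below / less than" price pattern. `extract_entities` is hypothetical, and `expand_query_with_entities` is only a stand-in for the excerpt's `_expand_query_with_entities`, whose body is not shown.

```python
import re
from typing import Optional

# Hypothetical vocabularies; a real system would derive them from catalog attributes
COLORS = {"red", "green", "blue", "maroon", "pink", "yellow", "black", "white"}
FABRICS = {"silk", "cotton", "chiffon", "georgette", "linen", "organza"}
PRICE_CAP = re.compile(r"(?:under|below|less than)\s*(?:₹|rs\.?|inr)?\s*(\d[\d,]*)", re.IGNORECASE)


def extract_entities(text: str) -> dict:
    """Rule-based stand-in for the NLU step: colour, fabric and an upper price bound."""
    words = re.findall(r"[a-z]+", text.lower())
    entities: dict = {}
    color = next((w for w in words if w in COLORS), None)
    fabric = next((w for w in words if w in FABRICS), None)
    if color:
        entities["color"] = color
    if fabric:
        entities["fabric"] = fabric
    match = PRICE_CAP.search(text)
    if match:
        entities["max_price"] = int(match.group(1).replace(",", ""))
    return entities


def expand_query_with_entities(query: str, entities: Optional[dict]) -> str:
    """Append entity values so both BM25 and the embedding model see them explicitly."""
    extras = [str(v) for k, v in (entities or {}).items() if k in ("color", "fabric", "sku")]
    return " ".join([query, *extras])
```

The sketch appends only colour, fabric and SKU to the query text. A numeric price cap adds little as extra query words, so one plausible design applies `max_price` as a hard filter on catalog rows instead.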

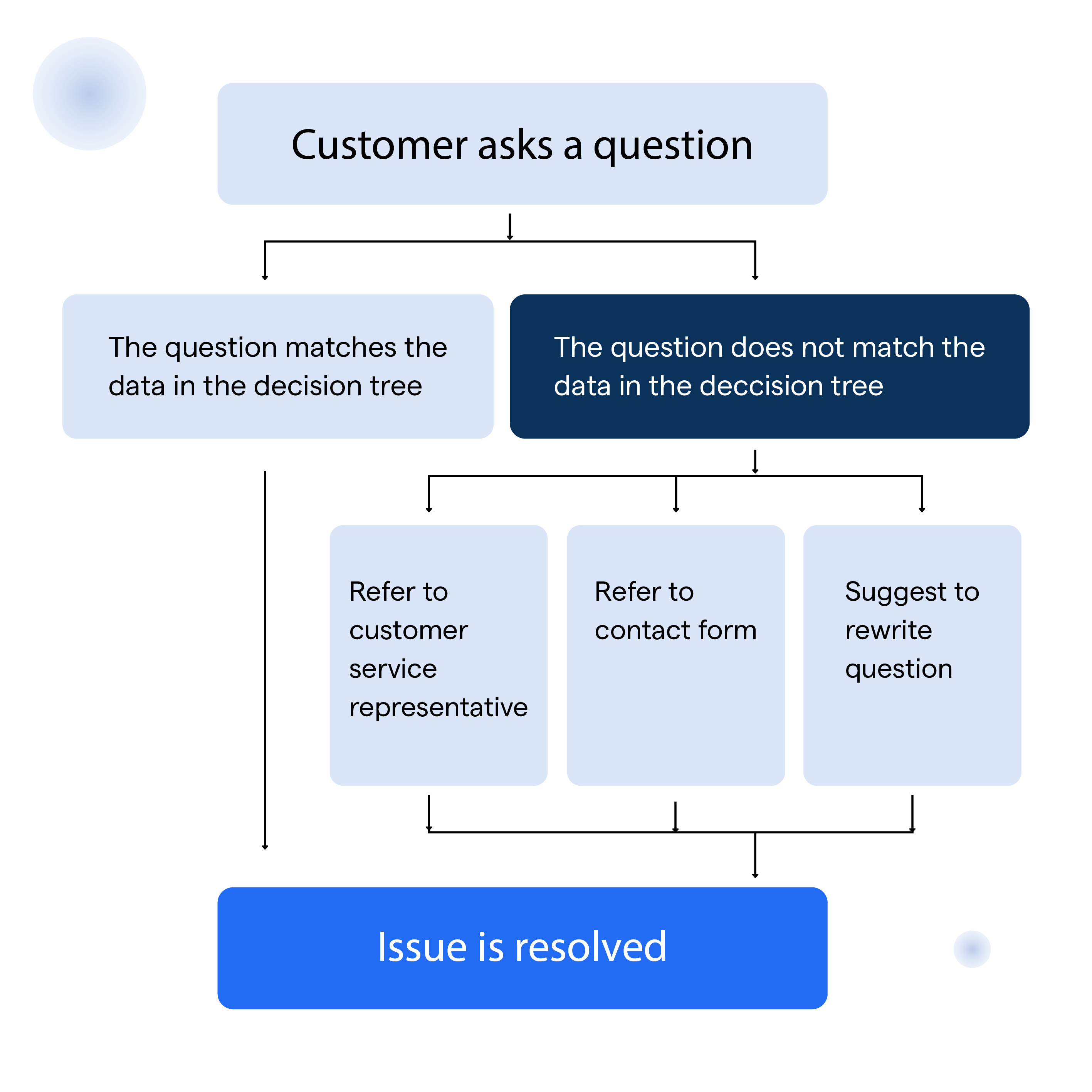

Workflow

A customer sends a WhatsApp message or image. The Omnichannel Gateway normalises the payload and resolves the org context. If the thread is human-controlled, the message goes straight to the Agent Inbox; otherwise the AI pipeline runs. An image goes to Vision AI, whose colour, fabric, and pattern tags query the RAG catalog; text goes straight to RAG retrieval; in both cases the retrieved context feeds the LLM reply. The Escalation Engine scores every turn; above the threshold it flips the human_controlled flag, pauses AI replies, and pushes a WebSocket alert to the agent dashboard.
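The routing decision can be sketched with the pipeline stages injected as plain callables. Everything here is hypothetical: `Inbound`, `ThreadState`, `handle_inbound` and the callable names. The sketch also assumes the escalation check runs before a reply is generated, which the workflow description does not pin down.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Inbound:
    thread_id: str
    text: Optional[str] = None
    image: Optional[bytes] = None


@dataclass
class ThreadState:
    human_controlled: bool = False


def handle_inbound(
    msg: Inbound,
    state: ThreadState,
    *,
    to_inbox: Callable[[Inbound], None],
    vision_tags: Callable[[bytes], str],
    retrieve: Callable[[str], str],
    generate: Callable[[str, str], str],
    should_escalate: Callable[[Inbound], bool],
) -> Optional[str]:
    """Return Kala's reply, or None when a human agent owns the thread."""
    if state.human_controlled:
        to_inbox(msg)                  # AI stays silent; the agent replies from the inbox
        return None
    if should_escalate(msg):
        state.human_controlled = True  # pause AI replies on this thread
        to_inbox(msg)
        return None
    # Photos become colour/fabric/pattern tags, which serve as the catalog query
    query = vision_tags(msg.image) if msg.image is not None else (msg.text or "")
    context = retrieve(query)
    return generate(msg.text or query, context)
```

Injecting the stages keeps the routing logic testable without a live model, catalog or WhatsApp connection.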

Results & Impact

"Before Unify, our team was manually copy-pasting product links at 11 PM. Now Kala handles 85% of queries end-to-end — she even matches customers to sarees when they send us a blurry reference photo from Pinterest."

85% Queries Resolved by AI

Only ~15% of conversations required human intervention, down from 100% manual handling before deployment.

24/7 Sales Coverage

Kala operates across all time zones on WhatsApp, Instagram, and Telegram with sub-2-second response times.

Multilingual by Design

Language detection and mirroring let customers type in Telugu or Hinglish and get a reply in the same language.
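One simple way to implement the detection half is a script check followed by a word-list check. This sketch assumes Telugu is typed in Telugu script (Unicode block U+0C00 to U+0C7F) and uses a hypothetical list of romanised Hindi marker words to recognise Hinglish. Romanised Telugu would need its own markers. The returned instruction is meant to be added to the system prompt.

```python
import re

TELUGU_SCRIPT = re.compile(r"[\u0C00-\u0C7F]")  # Telugu Unicode block
# Hypothetical marker list of common romanised Hindi words
HINGLISH_MARKERS = {"hai", "nahi", "kya", "chahiye", "accha", "acha", "kitna", "mujhe", "dikhao", "bhi"}
REPLY_INSTRUCTIONS = {
    "telugu": "Reply in Telugu script.",
    "hinglish": "Reply in Hinglish: Hindi words in Latin script, mixed with English.",
    "english": "Reply in English.",
}


def detect_language(text: str) -> str:
    if TELUGU_SCRIPT.search(text):
        return "telugu"
    words = set(re.findall(r"[a-z]+", text.lower()))
    return "hinglish" if words & HINGLISH_MARKERS else "english"


def mirroring_instruction(text: str) -> str:
    """System-prompt line asking the model to answer in the customer's language."""
    return REPLY_INSTRUCTIONS[detect_language(text)]
```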

Visual Product Matching

Vision AI enables customers to send a reference photo and get matched sarees from a 5,000+ product catalog — a feature impossible with keyword-only search.

Zero-Setup RAG Updates

The catalog can be refreshed from Supabase or a CSV drop. The RAG index rebuilds automatically on the next query with no server restart required.
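Rebuilding "on the next query" amounts to checking a cheap catalog version before each retrieval. This sketch assumes such a version token exists: for a CSV drop, its modification time; for Supabase, perhaps a maximum `updated_at` value. `LazyCatalogIndex` and `csv_version` are hypothetical names, and the loader and index builder are passed in as callables.

```python
import os
from typing import Any, Callable


class LazyCatalogIndex:
    """Rebuilds the search index on the first query after the catalog changes."""

    def __init__(
        self,
        current_version: Callable[[], Any],
        load_catalog: Callable[[], list],
        build_index: Callable[[list], Any],
    ) -> None:
        self._current_version = current_version  # cheap check, e.g. CSV mtime
        self._load_catalog = load_catalog        # e.g. read the CSV or query Supabase
        self._build_index = build_index          # e.g. fit BM25 and embed products
        self._version: Any = None
        self._index: Any = None

    def ensure_fresh(self) -> Any:
        version = self._current_version()
        if self._index is None or version != self._version:
            self._index = self._build_index(self._load_catalog())
            self._version = version
        return self._index


def csv_version(path: str) -> int:
    """Version token for a CSV drop: its last-modified time in nanoseconds."""
    return os.stat(path).st_mtime_ns
```

Only the version check runs on every query, so the expensive reload and re-embedding happen once per catalog change.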

Multi-Tenant Isolation

Every query is scoped by org_id, ensuring Brand A's catalog and conversations are never visible to Brand B.
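Scoping can be enforced by routing every read through an object that already holds the tenant's `org_id`. The sketch uses SQLite from the standard library and assumes a hypothetical `products(org_id, sku, name, color)` schema. With Supabase, Postgres row-level security policies are another way to enforce the same rule inside the database itself.

```python
import sqlite3
from typing import Optional


class ScopedRepo:
    """Every read goes through org_id, so one tenant can never select another's rows."""

    def __init__(self, conn: sqlite3.Connection, org_id: str) -> None:
        self._conn = conn
        self._org_id = org_id

    def products(self, color: Optional[str] = None) -> list:
        # The org_id filter is always present; callers can only narrow it further
        sql = "SELECT sku, name FROM products WHERE org_id = ?"
        params: list = [self._org_id]
        if color:
            sql += " AND color = ?"
            params.append(color)
        return self._conn.execute(sql + " ORDER BY sku", params).fetchall()
```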

About the Author

Parmeet Singh Talwar

AI Context Engineer

Apex Neural

Parmeet engineers context-driven AI that combines LLMs with structured backend architecture and multi-platform integrations. He builds AI-powered systems with secure OAuth, fine-tunes open-source LLMs, and integrates image and video generation into production pipelines. He focuses on clean design and system reliability.
